Chapter 1. Introduction

“Science is in danger, and for that reason it is becoming dangerous” -Pierre Bourdieu, Science of Science and Reflexivity

Why an Open Access Textbook on Qualitative Research Methods?

I have been teaching qualitative research methods to both undergraduates and graduate students for many years. Although there are some excellent textbooks out there, they are often costly, and none of them, to my mind, properly introduces qualitative research methods to the beginning student (whether undergraduate or graduate student). In contrast, this open-access textbook is designed as a (free) true introduction to the subject, with helpful, practical pointers on how to conduct research and how to access more advanced instruction.

Textbooks are typically arranged in one of two ways: (1) by technique (each chapter covers one method used in qualitative research); or (2) by process (chapters advance from research design through publication). But both of these approaches are necessary for the beginner student. This textbook will have sections dedicated to the process as well as the techniques of qualitative research. This is a true “comprehensive” book for the beginning student. In addition to covering techniques of data collection and data analysis, it provides a road map of how to get started and how to keep going and where to go for advanced instruction. It covers aspects of research design and research communication as well as methods employed. Along the way, it includes examples from many different disciplines in the social sciences.

The primary goal has been to create a useful, accessible, engaging textbook for use across many disciplines. And, let’s face it. Textbooks can be boring. I hope readers find this to be a little different. I have tried to write in a practical and forthright manner, with many lively examples and references to good and intellectually creative qualitative research. Woven throughout the text are short textual asides (in colored textboxes) by professional (academic) qualitative researchers in various disciplines. These short accounts by practitioners should help inspire students. So, let’s begin!

What is Research?

When we use the word research, what exactly do we mean by that? This is one of those words that everyone thinks they understand, but it is worth beginning this textbook with a short explanation. We use the term to refer to “empirical research,” which is actually a historically specific approach to understanding the world around us. Think about how you know things about the world. [1] You might know your mother loves you because she’s told you she does. Or because that is what “mothers” do by tradition. Or you might know because you’ve looked for evidence that she does, like taking care of you when you are sick or reading to you in bed or working two jobs so you can have the things you need to do OK in life. Maybe it seems churlish to look for evidence; you just take it “on faith” that you are loved.

Only one of the above comes close to what we mean by research. Empirical research is research (investigation) based on evidence. Conclusions can then be drawn from observable data. This observable data can also be “tested” or checked. If the data cannot be tested, that is a good indication that we are not doing research. Note that we can never “prove” conclusively, through observable data, that our mothers love us. We might have some “disconfirming evidence” (that time she didn’t show up to your graduation, for example) that could push you to question an original hypothesis, but no amount of “confirming evidence” will ever allow us to say with 100% certainty, “my mother loves me.” Faith and tradition and authority work differently. Our knowledge can be 100% certain using each of those alternative methods of knowledge, but our certainty in those cases will not be based on facts or evidence.

For many periods of history, those in power have been nervous about “science” because it uses evidence and facts as the primary source of understanding the world, and facts can be at odds with what power or authority or tradition want you to believe. That is why I say that scientific empirical research is a historically specific approach to understanding the world. You are in college or university now partly to learn how to engage in this historically specific approach.

In the sixteenth and seventeenth centuries in Europe, there was a newfound respect for empirical research, some of which was seriously challenging to the established church. Using observations and testing them, scientists found that the earth was not at the center of the universe, for example, but rather that it was but one planet of many which circled the sun. [2] Over the next two centuries, the sciences of astronomy, physics, biology, and chemistry emerged and became disciplines taught in universities. All used the scientific method of observation and testing to advance knowledge. Knowledge about people and social institutions, however, was still left to faith, tradition, and authority. Historians and philosophers and poets wrote about the human condition, but none of them used research to do so. [3]

It was not until the nineteenth century that “social science” really emerged, using the scientific method (empirical observation) to understand people and social institutions. New fields of sociology, economics, political science, and anthropology emerged. The first sociologists, people like Auguste Comte and Karl Marx, sought specifically to apply the scientific method of research to understand society, Engels famously claiming that Marx had done for the social world what Darwin did for the natural world, tracing its laws of development. Today we tend to take for granted the naturalness of science here, but it is actually a pretty recent and radical development.

To return to the question, “does your mother love you?” Well, this is actually not really how a researcher would frame the question, as it is too specific to your case. It doesn’t tell us much about the world at large, even if it does tell us something about you and your relationship with your mother. A social science researcher might ask, “do mothers love their children?” Or maybe they would be more interested in how this loving relationship might change over time (e.g., “do mothers love their children more now than they did in the 18th century when so many children died before reaching adulthood?”) or perhaps they might be interested in measuring quality of love across cultures or time periods, or even establishing “what love looks like” using the mother/child relationship as a site of exploration. All of these make good research questions because we can use observable data to answer them.

What is Qualitative Research?

“All we know is how to learn. How to study, how to listen, how to talk, how to tell. If we don’t tell the world, we don’t know the world. We’re lost in it, we die.” -Ursula K. Le Guin, The Telling

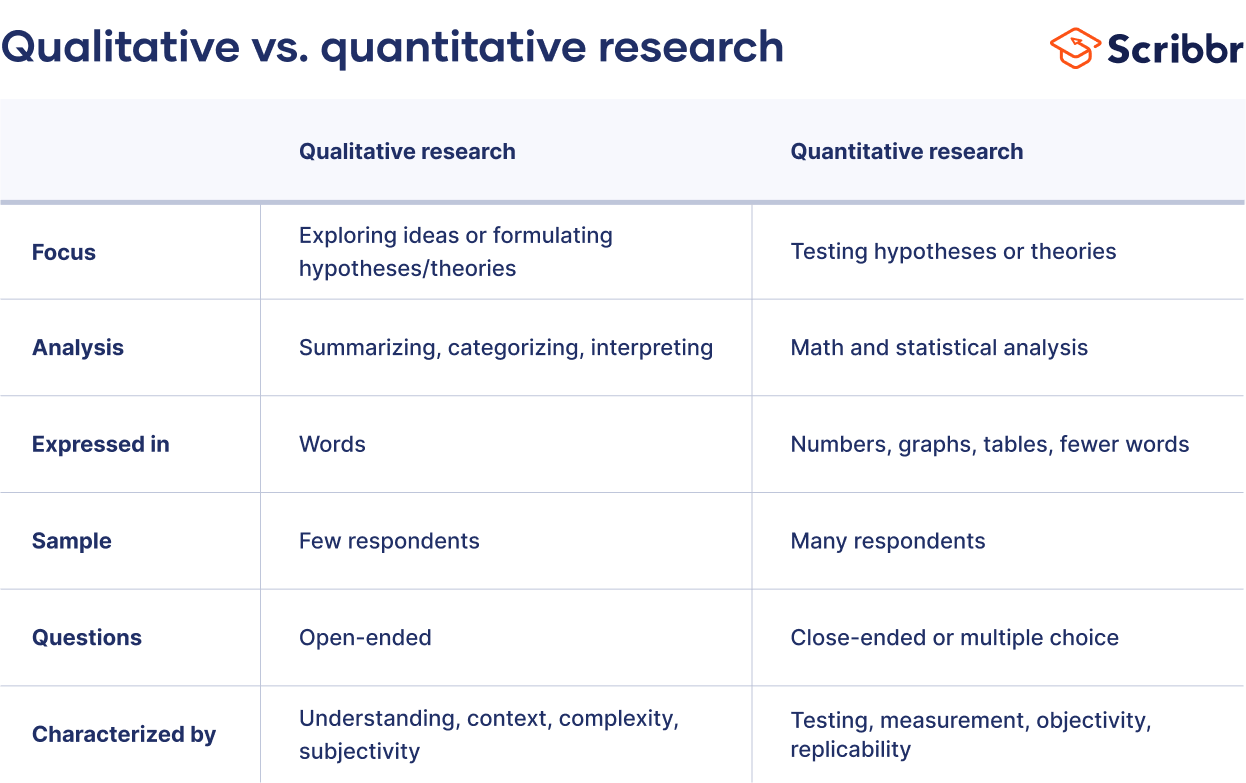

At its simplest, qualitative research is research about the social world that does not use numbers in its analyses. All those who fear statistics can breathe a sigh of relief – there are no mathematical formulae or regression models in this book! But this definition is less about what qualitative research can be and more about what it is not. To be honest, any simple statement will fail to capture the power and depth of qualitative research. One way of contrasting qualitative research to quantitative research is to note that the focus of qualitative research is less about explaining and predicting relationships between variables and more about understanding the social world. To use our mother love example, the question about “what love looks like” is a good question for the qualitative researcher while all questions measuring love or comparing incidences of love (both of which require measurement) are good questions for quantitative researchers. Patton writes,

Qualitative data describe. They take us, as readers, into the time and place of the observation so that we know what it was like to have been there. They capture and communicate someone else’s experience of the world in his or her own words. Qualitative data tell a story. (Patton 2002:47)

Qualitative researchers are asking different questions about the world than their quantitative colleagues. Even when researchers are employed in “mixed methods” research (both quantitative and qualitative), they are using different methods to address different questions of the study. I do a lot of research about first-generation and working-class college students. Where a quantitative researcher might ask, how many first-generation college students graduate from college within four years, or does first-generation college status predict high student debt loads, a qualitative researcher might ask, how does the college experience differ for first-generation college students? What is it like to carry a lot of debt, and how does this impact the ability to complete college on time? Both sets of questions are important, but they can only be answered using specific tools tailored to those questions. For the former, you need large numbers to make adequate comparisons. For the latter, you need to talk to people, find out what they are thinking and feeling, and try to inhabit their shoes for a little while so you can make sense of their experiences and beliefs.

Examples of Qualitative Research

You have probably seen examples of qualitative research before, but you might not have paid particular attention to how they were produced or realized that the accounts you were reading were the result of hours, months, even years of research “in the field.” A good qualitative researcher will present the product of their hours of work in such a way that it seems natural, even obvious, to the reader. Because we are trying to convey “what it is like” answers, qualitative research is often presented as stories – stories about how people live their lives, go to work, raise their children, interact with one another. In some ways, this can seem like reading particularly insightful novels. But, unlike novels, there are very specific rules and guidelines that qualitative researchers follow to ensure that the “story” they are telling is accurate, a truthful rendition of what life is like for the people being studied. Most of this textbook will be spent conveying those rules and guidelines. Let’s take a look, first, however, at three examples of what the end product looks like. I have chosen these three examples to showcase very different approaches to qualitative research, and I will return to these three examples throughout the book. They were all published as whole books (not chapters or articles), and they are worth the long read, if you have the time. I will also provide some information on how these books came to be and the length of time it takes to get them into book form. It is important you know about this process, and the rest of this textbook will help explain why it takes so long to conduct good qualitative research!

Example 1: The End Game (Ethnography + Interviews)

Corey Abramson is a sociologist who teaches at the University of Arizona. In 2015 he published The End Game: How Inequality Shapes Our Final Years (2015). This book was based on the research he did for his dissertation at the University of California-Berkeley in 2012. Actually, the dissertation was completed in 2012, but the work that produced it took several years. The dissertation was entitled, “This is How We Live, This is How We Die: Social Stratification, Aging, and Health in Urban America” (2012). You can see how the title of the book version, which was written for a more general audience, has a more engaging sound to it, whereas the title of the dissertation version, which is what academic faculty read and evaluate, is more descriptive. You can read the title and know that this is a study about aging and health and that the focus is going to be inequality and that the context (place) is going to be “urban America.” It’s a study about “how” people do something – in this case, how they deal with aging and death. This is the very first sentence of the dissertation: “From our first breath in the hospital to the day we die, we live in a society characterized by unequal opportunities for maintaining health and taking care of ourselves when ill. These disparities reflect persistent racial, socio-economic, and gender-based inequalities and contribute to their persistence over time” (1). What follows is a truthful account of how that is so.

Corey Abramson spent three years conducting his research in four different urban neighborhoods. We call the type of research he conducted “comparative ethnographic” because he designed his study to compare groups of seniors as they went about their everyday business. It’s comparative because he is comparing different groups (based on race, class, gender) and ethnographic because he is studying the culture/way of life of a group. [4] He had an educated guess, rooted in what previous research had shown and what social theory would suggest, that people’s experiences of aging differ by race, class, and gender. So, he set up a research design that would allow him to observe differences. He chose two primarily middle-class neighborhoods (one was racially diverse and the other was predominantly White) and two primarily poor neighborhoods (one was racially diverse and the other was predominantly African American). He hung out in senior centers and other places seniors congregated, watched them as they took the bus to get prescriptions filled, sat in doctor’s offices with them, and listened to their conversations with each other. He also conducted more formal conversations, what we call in-depth interviews, with sixty seniors from each of the four neighborhoods. As with a lot of fieldwork, as he got closer to the people involved, he both expanded and deepened his reach –

By the end of the project, I expanded my pool of general observations to include various settings frequented by seniors: apartment building common rooms, doctors’ offices, emergency rooms, pharmacies, senior centers, bars, parks, corner stores, shopping centers, pool halls, hair salons, coffee shops, and discount stores. Over the course of the three years of fieldwork, I observed hundreds of elders, and developed close relationships with a number of them. (2012:10)

When Abramson rewrote the dissertation for a general audience and published his book in 2015, it got a lot of attention. It is a beautifully written book and it provided insight into a common human experience that we surprisingly know very little about. It won the Outstanding Publication Award by the American Sociological Association Section on Aging and the Life Course and was featured in the New York Times. The book was about aging, and specifically how inequality shapes the aging process, but it was also about much more than that. It helped show how inequality affects people’s everyday lives. For example, by observing the difficulties the poor had in setting up appointments and getting to them using public transportation and then being made to wait to see a doctor, sometimes in standing-room-only situations, when they are unwell, and then being treated dismissively by hospital staff, Abramson allowed readers to feel the material reality of being poor in the US. Comparing these examples with those of seniors with adequate supplemental insurance, who have the resources to hire car services or have others assist them in arranging care when they need it, jolts the reader to understand and appreciate the difference money makes in the lives and circumstances of us all, and in a way that simply reading a statistic (“80% of the poor do not keep regular doctor’s appointments”) does not. Qualitative research can reach into spaces and places that often go unexamined and then report back to the rest of us what it is like in those spaces and places.

Example 2: Racing for Innocence (Interviews + Content Analysis + Fictional Stories)

Jennifer Pierce is a Professor of American Studies at the University of Minnesota. Trained as a sociologist, she has written a number of books about gender, race, and power. Her very first book, Gender Trials: Emotional Lives in Contemporary Law Firms, published in 1995, is a brilliant look at gender dynamics within two law firms. Pierce was a participant observer, working as a paralegal, and she observed how female lawyers and female paralegals struggled to obtain parity with their male colleagues.

Fifteen years later, she reexamined the context of the law firm to include an examination of racial dynamics, particularly how elite white men working in these spaces created and maintained a culture that made it difficult for both female attorneys and attorneys of color to thrive. Her book, Racing for Innocence: Whiteness, Gender, and the Backlash Against Affirmative Action, published in 2012, is an interesting and creative blending of interviews with attorneys, content analyses of popular films during this period, and fictional accounts of racial discrimination and sexual harassment. The law firm she chose to study had come under an affirmative action order and was in the process of implementing equitable policies and programs. She wanted to understand how recipients of white privilege (the elite white male attorneys) come to deny the role they play in reproducing inequality. Through interviews with attorneys who were present both before and during the affirmative action order, she creates a historical record of the “bad behavior” that necessitated new policies and procedures, but also, and more importantly, probes the participants’ understanding of this behavior. It should come as no surprise that most (but not all) of the white male attorneys saw little need for change, and that almost everyone else had accounts that were different if not sometimes downright harrowing.

I’ve used Pierce’s book in my qualitative research methods courses as an example of an interesting blend of techniques and presentation styles. My students often have a very difficult time with the fictional accounts she includes. But they serve an important communicative purpose here. They are her attempts at presenting “both sides” to an objective reality – something happens (Pierce writes this something so it is very clear what it is), and the two participants to the thing that happened have very different understandings of what this means. By including these stories, Pierce presents one of her key findings – people remember things differently and these different memories tend to support their own ideological positions. I wonder what Pierce would have written had she studied the murder of George Floyd or the storming of the US Capitol on January 6 or any number of other historic events whose observers and participants record very different happenings.

This is not to say that qualitative researchers write fictional accounts. In fact, the use of fiction in our work remains controversial. When used, it must be clearly identified as a presentation device, as Pierce did. I include Racing for Innocence here as an example of the multiple uses of methods and techniques and the way that these work together to produce better understandings by us, the readers, of what Pierce studied. We readers come away with a better grasp of how and why advantaged people understate their own involvement in situations and structures that advantage them. This is normal human behavior, in other words. This case may have been about elite white men in law firms, but the general insights here can be transposed to other settings. Indeed, Pierce argues that more research needs to be done about the role elites play in the reproduction of inequality in the workplace in general.

Example 3: Amplified Advantage (Mixed Methods: Survey + Interviews + Focus Groups + Archives)

The final example comes from my own work with college students, particularly the ways in which class background affects the experience of college and outcomes for graduates. I include it here as an example of mixed methods, and for the use of supplementary archival research. I’ve done a lot of research over the years on first-generation, low-income, and working-class college students. I am curious (and skeptical) about the possibility of social mobility today, particularly with the rising cost of college and growing inequality in general. As one of the few people in my family to go to college, I didn’t grow up with a lot of examples of what college was like or how to make the most of it. And when I entered graduate school, I realized with dismay that there were very few people like me there. I worried about becoming too different from my family and friends back home. And I wasn’t at all sure that I would ever be able to pay back the huge load of debt I was taking on. And so I wrote my dissertation and first two books about working-class college students. These books focused on experiences in college and the difficulties of navigating between family and school (Hurst 2010a, 2012). But even after all that research, I kept coming back to wondering if working-class students who made it through college had an equal chance at finding good jobs and happy lives.

What happens to students after college? Do working-class students fare as well as their peers? I knew from my own experience that barriers continued through graduate school and beyond, and that my debt load was higher than that of my peers, constraining some of the choices I made when I graduated. To answer these questions, I designed a study of students attending small liberal arts colleges, the type of college that tried to equalize the experience of students by requiring all students to live on campus and offering small classes with lots of interaction with faculty. These private colleges tend to have more money and resources so they can provide financial aid to low-income students. They also attract some very wealthy students. Because they enroll students across the class spectrum, I would be able to draw comparisons. I ended up spending about four years collecting data, both a survey of more than 2000 students (which formed the basis for quantitative analyses) and qualitative data collection (interviews, focus groups, archival research, and participant observation). This is what we call a “mixed methods” approach because we use both quantitative and qualitative data. The survey gave me a large enough number of students that I could make comparisons of the “how many” kind and say with some authority that there were in fact significant differences in experience and outcome by class (e.g., wealthier students earned more money and had little debt; working-class students often found jobs that were not in their chosen careers and were very affected by debt; upper-middle-class students were more likely to go to graduate school). But the survey analyses could not explain why these differences existed. For that, I needed to talk to people and ask them about their motivations and aspirations. I needed to understand their perceptions of the world, and it is very hard to do this through a survey.

By interviewing students and recent graduates, I was able to discern particular patterns and pathways through college and beyond. Specifically, I identified three versions of gameplay. Upper-middle-class students, whose parents were themselves professionals (academics, lawyers, managers of non-profits), saw college as the first stage of their education and took classes and declared majors that would prepare them for graduate school. They also spent a lot of time building their resumes, taking advantage of opportunities to help professors with their research or to study abroad. This helped them gain admission to highly ranked graduate schools and interesting jobs in the public sector. In contrast, upper-class students, whose parents were wealthy and more likely to be engaged in business (as CEOs or other high-level directors), prioritized building social capital. They did this by joining fraternities and sororities and playing club sports. This helped them when they graduated as they called on friends and parents of friends to find them well-paying jobs. Finally, low-income, first-generation, and working-class students were often adrift. They took the classes that were recommended to them but without the knowledge of how to connect them to life beyond college. They spent time working and studying rather than partying or building their resumes. All three sets of students thought they were “doing college” the right way, the way that one was supposed to do college. But these three versions of gameplay led to distinct outcomes that advantaged some students over others. I titled my work “Amplified Advantage” to highlight this process.

These three examples, Corey Abramson’s The End Game, Jennifer Pierce’s Racing for Innocence, and my own Amplified Advantage, demonstrate the range of approaches and tools available to the qualitative researcher. They also help explain why qualitative research is so important. Numbers can tell us some things about the world, but they cannot get at the hearts and minds, motivations and beliefs of the people who make up the social worlds we inhabit. For that, we need tools that allow us to listen and make sense of what people tell us and show us. That is what good qualitative research offers us.

How Is This Book Organized?

This textbook is organized as a comprehensive introduction to the use of qualitative research methods. The first half covers general topics (e.g., approaches to qualitative research, ethics) and research design (necessary steps for building a successful qualitative research study). The second half reviews various data collection and data analysis techniques. Of course, building a successful qualitative research study requires some knowledge of data collection and data analysis so the chapters in the first half and the chapters in the second half should be read in conversation with each other. That said, each chapter can be read on its own for assistance with a particular narrow topic. In addition to the chapters, a helpful glossary can be found in the back of the book. Rummage around in the text as needed.

Chapter Descriptions

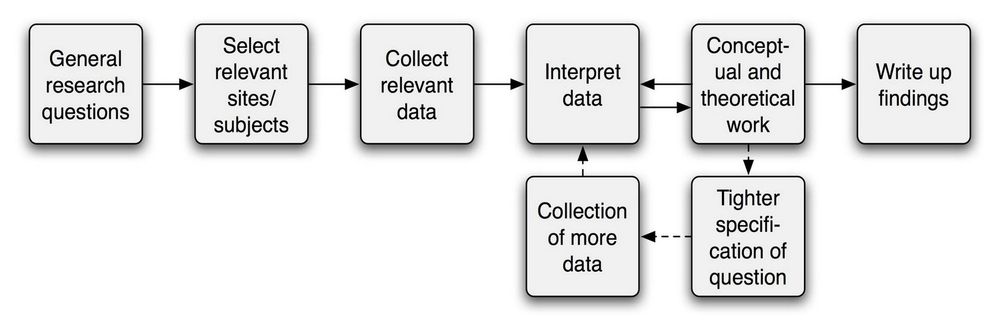

Chapter 2 provides an overview of the Research Design Process. How does one begin a study? What is an appropriate research question? How is the study to be done – with what methods? Involving what people and sites? Although qualitative research studies can and often do change and develop over the course of data collection, it is important to have a good idea of what the aims and goals of your study are at the outset and a good plan of how to achieve those aims and goals. Chapter 2 provides a road map of the process.

Chapter 3 describes and explains various ways of knowing the (social) world. What is it possible for us to know about how other people think or why they behave the way they do? What does it mean to say something is a “fact” or that it is “well-known” and understood? Qualitative researchers are particularly interested in these questions because of the types of research questions we are interested in answering (the how questions rather than the how many questions of quantitative research). Qualitative researchers have adopted various epistemological approaches. Chapter 3 will explore these approaches, highlighting interpretivist approaches that acknowledge the subjective aspect of reality – in other words, reality and knowledge are not objective but rather influenced by (interpreted through) people.

Chapter 4 focuses on the practical matter of developing a research question and finding the right approach to data collection. In any given study (think of Corey Abramson’s study of aging, for example), there may be years of collected data, thousands of observations, hundreds of pages of notes to read and review and make sense of. If all you had was a general interest area (“aging”), it would be very difficult, nearly impossible, to make sense of all of that data. The research question provides a helpful lens to refine and clarify (and simplify) everything you find and collect. For that reason, it is important to pull out that lens (articulate the research question) before you get started. In the case of the aging study, Corey Abramson was interested in how inequalities affected understandings and responses to aging. It is for this reason he designed a study that would allow him to compare different groups of seniors (some middle-class, some poor). Inevitably, he saw much more in the three years in the field than what made it into his book (or dissertation), but he was able to narrow down the complexity of the social world to provide us with this rich account linked to the original research question. Developing a good research question is thus crucial to effective design and a successful outcome. Chapter 4 will provide pointers on how to do this. Chapter 4 also provides an overview of general approaches taken to doing qualitative research and various “traditions of inquiry.”

Chapter 5 explores sampling. After you have developed a research question and have a general idea of how you will collect data (Observations? Interviews?), how do you go about actually finding people and sites to study? Although there is no “correct number” of people to interview, the sample should follow the research question and research design. Unlike quantitative research, qualitative research involves nonprobability sampling. Chapter 5 explains why this is so and what qualities instead make a good sample for qualitative research.

Chapter 6 addresses the importance of reflexivity in qualitative research. Related to epistemological issues of how we know anything about the social world, qualitative researchers understand that we the researchers can never be truly neutral or outside the study we are conducting. As observers, we see things that make sense to us and may entirely miss what is either too obvious to note or too different to comprehend. As interviewers, as much as we would like to ask questions neutrally and remain in the background, interviews are a form of conversation, and the persons we interview are responding to us. Therefore, it is important to reflect upon our social positions and the knowledges and expectations we bring to our work and to work through any blind spots that we may have. Chapter 6 provides some examples of reflexivity in practice and exercises for thinking through one’s own biases.

Chapter 7 is a very important chapter and should not be overlooked. As a practical matter, it should also be read closely with chapters 6 and 8. Because qualitative researchers deal with people and the social world, it is imperative they develop and adhere to a strong ethical code for conducting research in a way that does not harm. There are legal requirements and guidelines for doing so (see chapter 8), but these requirements should not be considered synonymous with the ethical code required of us. Each researcher must constantly interrogate every aspect of their research, from research question to design to sample through analysis and presentation, to ensure that a minimum of harm (ideally, zero harm) is caused. Because each research project is unique, the standards of care for each study are unique. Part of being a professional researcher is carrying this code in one’s heart, being constantly attentive to what is required under particular circumstances. Chapter 7 provides various research scenarios and asks readers to weigh in on the suitability and appropriateness of the research. If done in a class setting, it will become obvious fairly quickly that there are often no absolutely correct answers, as different people find different aspects of the scenarios of greatest importance. Minimizing the harm in one area may require possible harm in another. Being attentive to all the ethical aspects of one’s research and making the best judgments one can, clearly and consciously, is an integral part of being a good researcher.

Chapter 8, best read in conjunction with chapter 7, explains the role and importance of Institutional Review Boards (IRBs). Under federal guidelines, an IRB is an appropriately constituted group that has been formally designated to review and monitor research involving human subjects. Every institution that receives funding from the federal government has an IRB. IRBs have the authority to approve, require modifications to (to secure approval), or disapprove research. This group review serves an important role in the protection of the rights and welfare of human research subjects. Chapter 8 reviews the history of IRBs and the work they do but also argues that IRBs’ review of qualitative research is often both over-inclusive and under-inclusive. Some aspects of qualitative research are not well understood by IRBs, given that they were developed to prevent abuses in biomedical research. Thus, it is important not to rely on IRBs to identify all the potential ethical issues that emerge in our research (see chapter 7).

Chapter 9 provides help for getting started on formulating a research question based on gaps in the pre-existing literature. Research is conducted as part of a community, even if particular studies are done by single individuals (or small teams). What any of us finds and reports back becomes part of a much larger body of knowledge. Thus, it is important that we look at the larger body of knowledge before we actually start our bit to see how we can best contribute. When I first began interviewing working-class college students, there was only one other similar study I could find, and it hadn’t been published (it was a dissertation on students from poor backgrounds). But there had been a lot published by professors who had grown up working class and made it through college despite the odds. These accounts by “working-class academics” became an important inspiration for my study and helped me frame the questions I asked the students I interviewed. Chapter 9 will provide some pointers on how to search for relevant literature and how to use this to refine your research question.

Chapter 10 serves as a bridge between the two parts of the textbook, by introducing techniques of data collection. Qualitative research is often characterized by the form of data collection – for example, an ethnographic study is one that employs primarily observational data collection for the purpose of documenting and presenting a particular culture or ethnos. Techniques can be effectively combined, depending on the research question and the aims and goals of the study. Chapter 10 provides a general overview of all the various techniques and how they can be combined.

The second part of the textbook moves into the doing part of qualitative research once the research question has been articulated and the study designed. Chapters 11 through 17 cover various data collection techniques and approaches. Chapters 18 and 19 provide a very simple overview of basic data analysis. Chapter 20 covers communication of the data to various audiences, and in various formats.

Chapter 11 begins our overview of data collection techniques with a focus on interviewing, the true heart of qualitative research. This technique can serve as the primary and exclusive form of data collection, or it can be used to supplement other forms (observation, archival). An interview is distinct from a survey, where questions are asked in a specific order and often with a range of predetermined responses available. Interviews can be conversational and unstructured or, more conventionally, semistructured, where a general set of interview questions “guides” the conversation. Chapter 11 covers the basics of interviews: how to create interview guides, how many people to interview, where to conduct the interview, what to watch out for (how to prepare against things going wrong), and how to get the most out of your interviews.

Chapter 12 covers an important variant of interviewing, the focus group. Focus groups are semistructured interviews with a group of people moderated by a facilitator (the researcher or researcher’s assistant). Focus groups explicitly use group interaction to assist in the data collection. They are best used to collect data on a specific topic that is non-personal and shared among the group. For example, one might ask a group of college students about a common experience, such as taking classes by remote delivery during the pandemic year of 2020. Chapter 12 covers the basics of focus groups: when to use them, how to create interview guides for them, and how to run them effectively.

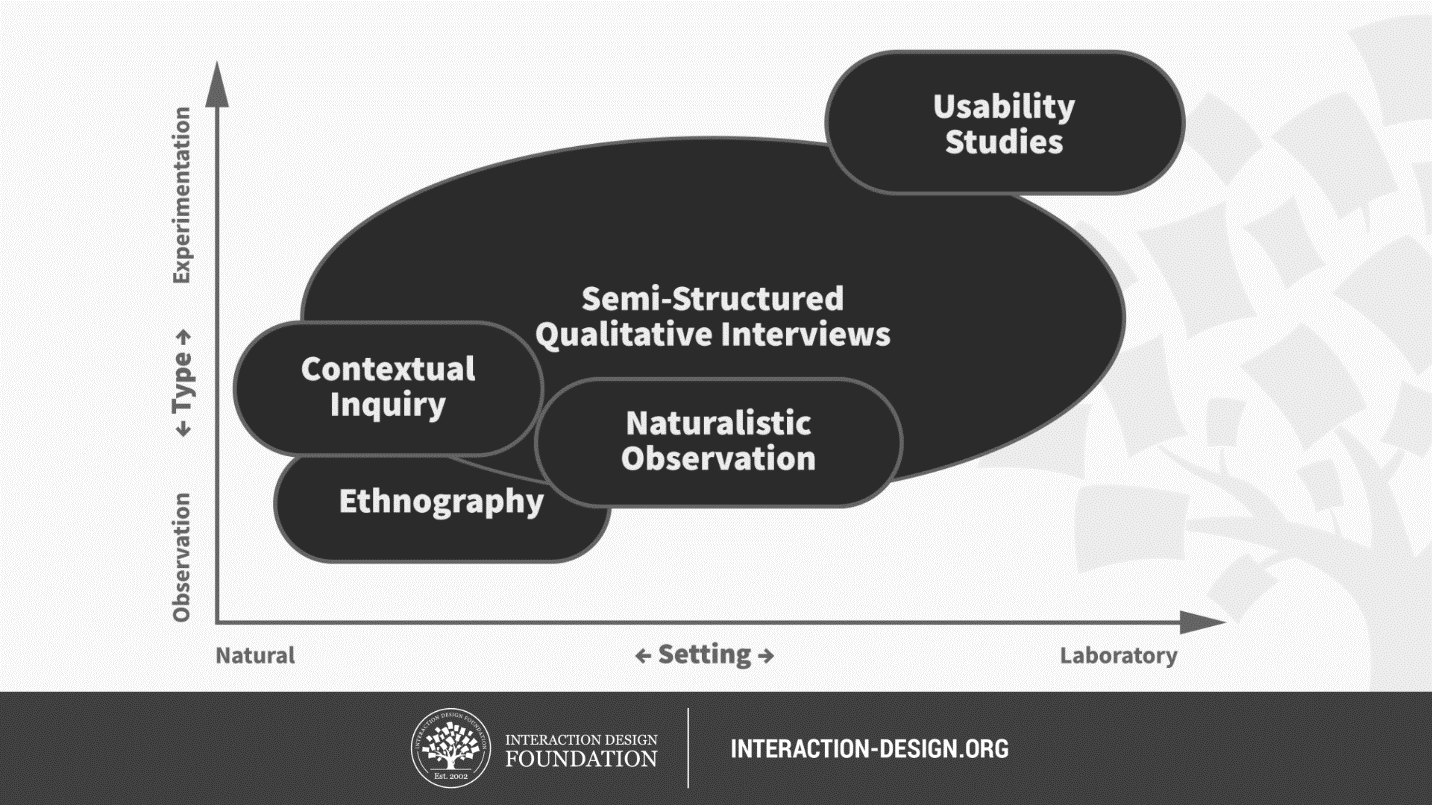

Chapter 13 moves away from interviewing to the second major form of data collection unique to qualitative researchers – observation. Qualitative research that employs observation can best be understood as falling on a continuum from “fly on the wall” observation (e.g., observing how strangers interact in a doctor’s waiting room) to “participant” observation, where the researcher is also an active participant in the activity being observed. For example, an activist in the Black Lives Matter movement might want to study the movement, using her inside position to gain access to observe key meetings and interactions. Chapter 13 covers the basics of participant observation studies: advantages and disadvantages, gaining access, ethical concerns related to insider/outsider status and entanglement, and recording techniques.

Chapter 14 takes a closer look at “deep ethnography” – immersion in the field of a particularly long duration for the purpose of gaining a deeper understanding and appreciation of a particular culture or social world. Clifford Geertz called this “deep hanging out.” Whereas participant observation is often combined with semistructured interview techniques, deep ethnography’s commitment to “living the life” or experiencing the situation as it really is demands more conversational and natural interactions with people. These interactions and conversations may take place over months or even years. As can be expected, there are some costs to this technique, as well as some very large rewards when done competently. Chapter 14 provides some examples of deep ethnographies that will inspire some beginning researchers and intimidate others.

Chapter 15 moves in the opposite direction of deep ethnography, a technique that is the least positivist of all those discussed here, to mixed methods, a set of techniques that is arguably the most positivist. A mixed methods approach combines both qualitative data collection and quantitative data collection, commonly by combining a survey that is analyzed statistically (e.g., cross-tabs or regression analyses of large number probability samples) with semistructured interviews. Although it is somewhat unconventional to discuss mixed methods in textbooks on qualitative research, I think it is important to recognize this often-employed approach here. There are several advantages and some disadvantages to taking this route. Chapter 15 will describe those advantages and disadvantages and provide some particular guidance on how to design a mixed methods study for maximum effectiveness.

Chapter 16 covers data collection that does not involve live human subjects at all – archival and historical research (chapter 17 will also cover data that does not involve interacting with human subjects). Sometimes people are unavailable to us, either because they do not wish to be interviewed or observed (as is the case with many “elites”) or because they are too far away, in both place and time. Fortunately, humans leave many traces and we can often answer questions we have by examining those traces. Special collections and archives can be goldmines for social science research. This chapter will explain how to access these places, for what purposes, and how to begin to make sense of what you find.

Chapter 17 covers another data collection area that does not involve face-to-face interaction with humans: content analysis. Although content analysis may be understood more properly as a data analysis technique, the term is often used for the entire approach, which will be the case here. Content analysis involves interpreting meaning from a body of text. This body of text might be something found in historical records (see chapter 16) or something collected by the researcher, as in the case of comment posts on a popular blog post. I once used the stories told by student loan debtors on the website studentloanjustice.org as the content I analyzed. Content analysis is particularly useful when attempting to define and understand prevalent stories or communication about a topic of interest. In other words, it is useful when we are less interested in what particular people (our defined sample) are doing or believing and more interested in what general narratives exist about a particular topic or issue. This chapter will explore different approaches to content analysis and provide helpful tips on how to collect data, how to turn that data into codes for analysis, and how to go about presenting what is found through analysis.

Where chapter 17 has pushed us towards data analysis, chapters 18 and 19 are all about what to do with the data collected, whether that data be in the form of interview transcripts or fieldnotes from observations. Chapter 18 introduces the basics of coding, the iterative process of assigning meaning to the data in order to both simplify and identify patterns. What is a code and how does it work? What are the different ways of coding data, and when should you use them? What is a codebook, and why do you need one? What does the process of data analysis look like?

Chapter 19 goes further into detail on codes and how to use them, particularly the later stages of coding in which our codes are refined, simplified, combined, and organized. These later rounds of coding are essential to getting the most out of the data we’ve collected. As students are often overwhelmed with the amount of data (a corpus of interview transcripts typically runs into the hundreds of pages; fieldnotes can easily top that), this chapter will also address time management and provide suggestions for dealing with chaos and reminders that feeling overwhelmed at the analysis stage is part of the process. By the end of the chapter, you should understand how “findings” are actually found.

The book concludes with a chapter dedicated to the effective presentation of data results. Chapter 20 covers the many ways that researchers communicate their studies to various audiences (academic, personal, political), what elements must be included in these various publications, and the hallmarks of excellent qualitative research that various audiences will be expecting. Because qualitative researchers are motivated by understanding and conveying meaning, effective communication is not only an essential skill but a fundamental facet of the entire research project. Ethnographers must be able to convey a certain sense of verisimilitude, the appearance of true reality. Those employing interviews must faithfully depict the key meanings of the people they interviewed in a way that rings true to those people, even if the end result surprises them. And all researchers must strive for clarity in their publications so that various audiences can understand what was found and why it is important.

The book concludes with a short chapter (chapter 21) discussing the value of qualitative research. At the very end of this book, you will find a glossary of terms. I recommend you make frequent use of the glossary and add to each entry as you find examples. Although the entries are meant to be simple and clear, you may also want to paraphrase the definition to make it “make sense” to you. In addition to the standard reference list (all works cited here), you will find various recommendations for further reading at the end of many chapters. Some of these recommendations will be examples of excellent qualitative research, indicated with an asterisk (*) at the end of the entry. As they say, a picture is worth a thousand words. A good example of qualitative research can teach you more about conducting research than any textbook can (this one included). I highly recommend you select one to three examples from these lists and read them along with the textbook.

A final note on the choice of examples – you will note that many of the examples used in the text come from research on college students. This is for two reasons. First, as most of my research falls in this area, I am most familiar with this literature and have contacts with those who do research here and can call upon them to share their stories with you. Second, and more importantly, my hope is that this textbook reaches a wide audience of beginning researchers who study widely and deeply across the range of what can be known about the social world (from marine resources management to public policy to nursing to political science to sexuality studies and beyond). It is sometimes difficult to find examples that speak to all those research interests, however. A focus on college students is something that all readers can understand and, hopefully, appreciate, as we are all now or have been at some point a college student.

Recommended Reading: Other Qualitative Research Textbooks

I’ve included a brief list of some of my favorite qualitative research textbooks and guidebooks if you need more than what you will find in this introductory text. For each, I’ve also indicated whether it is for “beginning” or “advanced” (graduate-level) readers. Many of these books have several editions that do not significantly vary; the edition recommended is merely the edition I have used in teaching, and its page numbers match any specific references made in the text.

Barbour, Rosaline. 2014. Introducing Qualitative Research: A Student’s Guide. Thousand Oaks, CA: SAGE. A good introduction to qualitative research, with abundant examples (often from the discipline of health care) and clear definitions. Includes quick summaries at the end of each chapter. However, some US students might find the British context distracting, and the book can be a bit advanced in some places. Beginning.

Bloomberg, Linda Dale, and Marie F. Volpe. 2012. Completing Your Qualitative Dissertation. 2nd ed. Thousand Oaks, CA: SAGE. Specifically designed to guide graduate students through the research process. Advanced.

Creswell, John W., and Cheryl Poth. 2018. Qualitative Inquiry and Research Design: Choosing among Five Traditions. 4th ed. Thousand Oaks, CA: SAGE. This is a classic and one of the go-to books I used myself as a graduate student. One of the best things about this text is its clear presentation of five distinct traditions in qualitative research. Despite the title, this reasonably sized book is about more than research design, including both data analysis and how to write about qualitative research. Advanced.

Lareau, Annette. 2021. Listening to People: A Practical Guide to Interviewing, Participant Observation, Data Analysis, and Writing It All Up. Chicago: University of Chicago Press. A readable and personal account of conducting qualitative research by an eminent sociologist, with a heavy emphasis on the kinds of participant-observation research conducted by the author. Despite its reader-friendliness, this is really a book targeted to graduate students learning the craft. Advanced.

Lune, Howard, and Bruce L. Berg. 2018. Qualitative Research Methods for the Social Sciences. 9th ed. Pearson. Although a good introduction to qualitative methods, the authors favor symbolic interactionist and dramaturgical approaches, which limits the appeal primarily to sociologists. Beginning.

Marshall, Catherine, and Gretchen B. Rossman. 2016. Designing Qualitative Research. 6th ed. Thousand Oaks, CA: SAGE. Very readable and accessible guide to research design by two educational scholars. Although the presentation is sometimes fairly dry, personal vignettes and illustrations enliven the text. Beginning.

Maxwell, Joseph A. 2013. Qualitative Research Design: An Interactive Approach. 3rd ed. Thousand Oaks, CA: SAGE. A short and accessible introduction to qualitative research design, particularly helpful for graduate students contemplating theses and dissertations. This has been a standard textbook in my graduate-level courses for years. Advanced.

Patton, Michael Quinn. 2002. Qualitative Research and Evaluation Methods. Thousand Oaks, CA: SAGE. This is a comprehensive text that served as my “go-to” reference when I was a graduate student. It is particularly helpful for those involved in program evaluation and other forms of evaluation studies and uses examples from a wide range of disciplines. Advanced.

Rubin, Ashley T. 2021. Rocking Qualitative Social Science: An Irreverent Guide to Rigorous Research. Stanford: Stanford University Press. A delightful and personal read. Rubin uses rock climbing as an extended metaphor for learning how to conduct qualitative research. A bit slanted toward ethnographic and archival methods of data collection, with frequent examples from her own studies in criminology. Beginning.

Weis, Lois, and Michelle Fine. 2000. Speed Bumps: A Student-Friendly Guide to Qualitative Research. New York: Teachers College Press. Readable and accessibly written in a quasi-conversational style. Particularly strong in its discussion of ethical issues throughout the qualitative research process. Not comprehensive, however, and very much tied to ethnographic research. Although designed for graduate students, this is a recommended read for students of all levels. Beginning.

Patton’s Ten Suggestions for Doing Qualitative Research

The following ten suggestions were made by Michael Quinn Patton in his massive textbook Qualitative Research and Evaluation Methods. This book is highly recommended for those of you who want more than an introduction to qualitative methods. It is the book I relied on heavily when I was a graduate student, although it is much easier to “dip into” when necessary than to read through as a whole. Patton is asked for “just one bit of advice” for a graduate student considering using qualitative research methods for their dissertation. Here are his top ten responses, in short form, heavily paraphrased, and with additional comments and emphases from me:

- Make sure that a qualitative approach fits the research question. The following are the kinds of questions that call out for qualitative methods or where qualitative methods are particularly appropriate: questions about people’s experiences or how they make sense of those experiences; studying a person in their natural environment; researching a phenomenon so unknown that it would be impossible to study it with standardized instruments or other forms of quantitative data collection.

- Study qualitative research by going to the original sources for the design and analysis appropriate to the particular approach you want to take (e.g., read Glaser and Strauss if you are using grounded theory).

- Find a dissertation adviser who understands or at least who will support your use of qualitative research methods. You are asking for trouble if your entire committee is populated by quantitative researchers, even if they are all very knowledgeable about the subject or focus of your study (maybe even more so if they are!).

- Really work on design. Doing qualitative research effectively takes a lot of planning. Even if things are more flexible than in quantitative research, a good design is absolutely essential when starting out.

- Practice data collection techniques, particularly interviewing and observing. There is definitely a set of learned skills here! Do not expect your first interview to be perfect. You will continue to grow as a researcher the more interviews you conduct, and you will probably come to understand yourself a bit more in the process, too. This is not easy, despite what others who don’t work with qualitative methods may assume (and tell you!).

- Have a plan for analysis before you begin data collection. This is often a requirement in IRB protocols, although you can get away with writing something fairly simple. And even if you are taking an approach, such as grounded theory, that pushes you to remain fairly open-minded during the data collection process, you still want to know what you will be doing with all the data collected – creating a codebook? Writing analytical memos? Comparing cases? Having a plan in hand will also help prevent you from collecting too much extraneous data.

- Be prepared to confront controversies both within the qualitative research community and between qualitative research and quantitative research. Don’t be naïve about this – qualitative research, particularly some approaches, will be derided by many more “positivist” researchers and audiences. For example, is an “n” of 1 really sufficient? Yes! But not everyone will agree.

- Do not make the mistake of using qualitative research methods because someone told you it was easier, or because you are intimidated by the math required of statistical analyses. Qualitative research is difficult in its own way (and many would claim much more time-consuming than quantitative research). Do it because you are convinced it is right for your goals, aims, and research questions.

- Find a good support network. This could be a research mentor, or it could be a group of friends or colleagues who are also using qualitative research, or it could be just someone who will listen to you work through all of the issues you will confront out in the field and during the writing process. Even though qualitative research often involves human subjects, it can be pretty lonely. A lot of times you will feel like you are working without a net. You have to create one for yourself. Take care of yourself.

- And, finally, in the words of Patton, “Prepare to be changed. Looking deeply at other people’s lives will force you to look deeply at yourself.”

- We will actually spend an entire chapter (chapter 3) looking at this question in much more detail!

- Note that this might have been news to Europeans at the time, but many other societies around the world had also come to this conclusion through observation. There is often a tendency to equate “the scientific revolution” with the European world in which it took place, but this is somewhat misleading.

- Historians are a special case here. Historians have scrupulously and rigorously investigated the social world, but not for the purpose of understanding general laws about how things work, which is the point of scientific empirical research. History is often referred to as an idiographic field of study, meaning that it studies things that happened or are happening in themselves and not for general observations or conclusions.

- Don’t worry, we’ll spend more time later in this book unpacking the meaning of ethnography and other terms that are important here. Note the available glossary.

An approach to research that is “multimethod in focus, involving an interpretative, naturalistic approach to its subject matter. This means that qualitative researchers study things in their natural settings, attempting to make sense of, or interpret, phenomena in terms of the meanings people bring to them. Qualitative research involves the studied use and collection of a variety of empirical materials – case study, personal experience, introspective, life story, interview, observational, historical, interactional, and visual texts – that describe routine and problematic moments and meanings in individuals’ lives” (Denzin and Lincoln 2005:2). Contrast with quantitative research.

In contrast to methodology, methods are more simply the practices and tools used to collect and analyze data. Examples of common methods in qualitative research are interviews, observations, and documentary analysis. One’s methodology should connect to one’s choice of methods, of course, but they are distinguishable terms. See also methodology.

A proposed explanation for an observation, phenomenon, or scientific problem that can be tested by further investigation. The positing of a hypothesis is often the first step in quantitative research but not in qualitative research. Even when qualitative researchers offer possible explanations in advance of conducting research, they will tend to not use the word “hypothesis” as it conjures up the kind of positivist research they are not conducting.

The foundational question to be addressed by the research study. This will form the anchor of the research design, collection, and analysis. Note that in qualitative research, the research question may, and probably will, alter or develop during the course of the research.

An approach to research that collects and analyzes numerical data for the purpose of finding patterns and averages, making predictions, testing causal relationships, and generalizing results to wider populations. Contrast with qualitative research.

Data collection that takes place in real-world settings, referred to as “the field;” a key component of much Grounded Theory and ethnographic research. Patton (2002) calls fieldwork “the central activity of qualitative inquiry” where “‘going into the field’ means having direct and personal contact with people under study in their own environments – getting close to people and situations being studied to personally understand the realities of minutiae of daily life” (48).

The people who are the subjects of a qualitative study. In interview-based studies, they may be the respondents to the interviewer; for purposes of IRBs, they are often referred to as the human subjects of the research.

The branch of philosophy concerned with knowledge. For researchers, it is important to recognize and adopt one of the many distinguishing epistemological perspectives as part of our understanding of what questions research can address or fully answer. See, e.g., constructivism, subjectivism, and objectivism.

An approach that refutes the possibility of neutrality in social science research. All research is “guided by a set of beliefs and feelings about the world and how it should be understood and studied” (Denzin and Lincoln 2005: 13). In contrast to positivism, interpretivism recognizes the social constructedness of reality, and researchers adopting this approach focus on capturing interpretations and understandings people have about the world rather than “the world” as it is (which is a chimera).

The cluster of data-collection tools and techniques that involve observing interactions between people, the behaviors and practices of individuals (sometimes in contrast to what they say about how they act and behave), and cultures in context. Observational methods are the key tools employed by ethnographers and in Grounded Theory.

Research based on data collected and analyzed by the researcher (in contrast to secondary “library” research).

The process of selecting people or other units of analysis to represent a larger population. In quantitative research, this representation is taken quite literally, as statistically representative. In qualitative research, in contrast, sample selection is often made based on potential to generate insight about a particular topic or phenomenon.

A method of data collection in which the researcher asks the participant questions; the answers to these questions are often recorded and transcribed verbatim. There are many different kinds of interviews – see also semistructured interview, structured interview, and unstructured interview.

The specific group of individuals that you will collect data from. Contrast with population.

The practice of being conscious of and reflective upon one’s own social location and presence when conducting research. Because qualitative research often requires interaction with live humans, failing to take into account how one’s presence, prior expectations, and social location affect the data collected and how they are analyzed may limit the reliability of the findings. This remains true even when dealing with historical archives and other content. Who we are matters when asking questions about how people experience the world because we, too, are a part of that world.

The science and practice of right conduct; in research, it is also the delineation of moral obligations towards research participants, communities to which we belong, and communities in which we conduct our research.

An administrative body established to protect the rights and welfare of human research subjects recruited to participate in research activities conducted under the auspices of the institution with which it is affiliated. The IRB is charged with the responsibility of reviewing all research involving human participants. The IRB is concerned with protecting the welfare, rights, and privacy of human subjects. The IRB has the authority to approve, disapprove, monitor, and require modifications in all research activities that fall within its jurisdiction as specified by both the federal regulations and institutional policy.

Research, according to US federal guidelines, that involves “a living individual about whom an investigator (whether professional or student) conducting research: (1) Obtains information or biospecimens through intervention or interaction with the individual, and uses, studies, or analyzes the information or biospecimens; or (2) Obtains, uses, studies, analyzes, or generates identifiable private information or identifiable biospecimens.”

One of the primary methodological traditions of inquiry in qualitative research, ethnography is the study of a group or group culture, largely through observational fieldwork supplemented by interviews. It is a form of fieldwork that may include participant-observation data collection. See chapter 14 for a discussion of deep ethnography.

A form of interview that follows a standard guide of questions asked, although the order of the questions may change to match the particular needs of each individual interview subject, and probing “follow-up” questions are often added during the course of the interview. The semi-structured interview is the primary form of interviewing used by qualitative researchers in the social sciences. It is sometimes referred to as an “in-depth” interview. See also interview and interview guide.

A method of observational data collection taking place in a natural setting; a form of fieldwork. The term encompasses a continuum of relative participation by the researcher (from full participant to “fly-on-the-wall” observer). This is also sometimes referred to as ethnography, although the latter is characterized by a greater focus on the culture under observation.

A research design that employs both quantitative and qualitative methods, as in the case of a survey supplemented by interviews.

An epistemological perspective that posits the existence of reality through sensory experience similar to empiricism but goes further in denying any non-sensory basis of thought or consciousness. In the social sciences, the term has roots in the proto-sociologist Auguste Comte, who believed he could discern “laws” of society similar to the laws of natural science (e.g., gravity). The term has come to mean the kinds of measurable and verifiable science conducted by quantitative researchers and is thus used pejoratively by some qualitative researchers interested in interpretation, consciousness, and human understanding. Calling someone a “positivist” is often intended as an insult. See also empiricism and objectivism.

A place or collection containing records, documents, or other materials of historical interest; most universities have an archive of material related to the university’s history, as well as other “special collections” that may be of interest to members of the community.

A method of both data collection and data analysis in which a given content (textual, visual, graphic) is examined systematically and rigorously to identify meanings, themes, patterns and assumptions. Qualitative content analysis (QCA) is concerned with gathering and interpreting an existing body of material.

A word or short phrase that symbolically assigns a summative, salient, essence-capturing, and/or evocative attribute for a portion of language-based or visual data (Saldaña 2021:5).

Usually a verbatim written record of an interview or focus group discussion.

The primary form of data for fieldwork, participant observation, and ethnography. These notes, taken by the researcher either during the course of fieldwork or at day’s end, should include as many details as possible on what was observed and what was said. They should include clear identifiers of date, time, setting, and names (or identifying characteristics) of participants.
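To make the list of identifiers concrete, here is a minimal Python sketch of how a single fieldnote entry might be stored. The class name `FieldNote`, its field names, and the sample entry are all hypothetical illustrations, not a standard format or anything prescribed by this textbook.

```python
from dataclasses import dataclass, field
from datetime import date, time


@dataclass
class FieldNote:
    """One fieldnote entry; the field names are illustrative, not a standard."""
    observed_on: date
    observed_at: time
    setting: str
    participants: list[str]          # names or identifying characteristics
    observations: str                # what was observed
    speech: list[str] = field(default_factory=list)  # what was said

    def header(self) -> str:
        """Return a one-line identifier for filing and retrieval."""
        who = ", ".join(self.participants)
        stamp = f"{self.observed_on.isoformat()} {self.observed_at.strftime('%H:%M')}"
        return f"{stamp} | {self.setting} | {who}"


# Hypothetical entry, invented for illustration only.
note = FieldNote(
    observed_on=date(2024, 3, 5),
    observed_at=time(14, 30),
    setting="campus food pantry",
    participants=["volunteer in red apron", "P1"],
    observations="Line formed before opening; volunteers greeted regulars by name.",
    speech=["P1: 'I come every Tuesday.'"],
)
print(note.header())
```

Making date, time, setting, and participants required fields means an entry cannot be created without them, which is one simple way to enforce the identifiers described above.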

The process of labeling and organizing qualitative data to identify different themes and the relationships between them; a way of simplifying data to allow better management and retrieval of key themes and illustrative passages. See coding frame and codebook.
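The "management and retrieval" side of coding can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the passages, source labels, and code names below are invented, and `build_code_index` is a hypothetical helper, not a feature of any qualitative analysis package.

```python
from collections import defaultdict

# Hypothetical coded segments: (source, passage, codes applied by the researcher).
coded_segments = [
    ("interview_01", "I never felt like I belonged in the honors program.",
     ["belonging", "academic identity"]),
    ("interview_02", "My advisor was the first person who believed in me.",
     ["mentorship"]),
    ("interview_02", "Everyone else seemed to know the unwritten rules.",
     ["belonging", "hidden curriculum"]),
]


def build_code_index(segments):
    """Map each code to the (source, passage) pairs it was applied to."""
    index = defaultdict(list)
    for source, passage, codes in segments:
        for code in codes:
            index[code].append((source, passage))
    return dict(index)


code_index = build_code_index(coded_segments)

# Retrieve every illustrative passage filed under one code.
for source, passage in code_index["belonging"]:
    print(f"{source}: {passage}")
```

The interpretive work of deciding which code fits which passage remains the researcher's; the index only makes coded passages easy to pull back up.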

A methodological tradition of inquiry and approach to analyzing qualitative data in which theories emerge from a rigorous and systematic process of induction. This approach was pioneered by the sociologists Glaser and Strauss (1967). The elements of theory generated from comparative analysis of data are, first, conceptual categories and their properties and, second, hypotheses or generalized relations among the categories and their properties – “The constant comparing of many groups draws the [researcher’s] attention to their many similarities and differences. Considering these leads [the researcher] to generate abstract categories and their properties, which, since they emerge from the data, will clearly be important to a theory explaining the kind of behavior under observation.” (36).

A detailed description of any proposed research that involves human subjects for review by IRB. The protocol serves as the recipe for the conduct of the research activity. It includes the scientific rationale to justify the conduct of the study, the information necessary to conduct the study, the plan for managing and analyzing the data, and a discussion of the research ethical issues relevant to the research. Protocols for qualitative research often include interview guides, all documents related to recruitment, informed consent forms, very clear guidelines on the safekeeping of materials collected, and plans for de-identifying transcripts or other data that include personal identifying information.
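As one illustration of what a de-identification plan might involve, the Python sketch below replaces a researcher-supplied list of identifiers with pseudonyms. The function name `deidentify`, the sample sentence, and the pseudonym labels are hypothetical. The approach only catches identifiers the researcher has listed, so it is a first pass and not a substitute for reading each transcript.

```python
import re


def deidentify(transcript, pseudonyms):
    """Replace each listed identifier with its pseudonym (whole words only)."""
    for real, alias in pseudonyms.items():
        pattern = r"\b" + re.escape(real) + r"\b"
        # A function replacement keeps the alias literal (no backslash escapes).
        transcript = re.sub(pattern, lambda _match, a=alias: a, transcript)
    return transcript


# Hypothetical identifiers and labels, invented for illustration.
pseudonyms = {
    "Maria": "[Participant 1]",
    "Dr. Lee": "[Teacher A]",
    "Riverside High": "[School 1]",
}
raw = "Maria said she met Dr. Lee at Riverside High."
clean = deidentify(raw, pseudonyms)
print(clean)
```

The key linking real names to pseudonyms is itself identifying information, so it would need to be stored separately and as securely as the original recordings.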

Introduction to Qualitative Research Methods Copyright © 2023 by Allison Hurst is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License, except where otherwise noted.

Qualitative Research: Definition

Qualitative research is the naturalistic study of social meanings and processes, using interviews, observations, and the analysis of texts and images. In contrast to quantitative researchers, whose statistical methods enable broad generalizations about populations (for example, comparisons of the percentages of U.S. demographic groups who vote in particular ways), qualitative researchers use in-depth studies of the social world to analyze how and why groups think and act in particular ways (for instance, case studies of the experiences that shape political views).

Events and Workshops

- Introduction to NVivo: Have you just collected your data and wondered what to do next? Join us for an introductory session on using NVivo to support your analytical process. This session covers only the features of the software and how to import your records. Feel free to attend either of the following sessions:
  - April 25, 2024, 12:30 pm - 1:45 pm, Green Library - SVA Conference Room 125
  - May 9, 2024, 12:30 pm - 1:45 pm, Green Library - SVA Conference Room 125

- Last Updated: May 23, 2024 1:27 PM

- URL: https://guides.library.stanford.edu/qualitative_research

Qualitative Research – Methods, Analysis Types and Guide

Qualitative Research

Qualitative research is a type of research methodology that focuses on exploring and understanding people’s beliefs, attitudes, behaviors, and experiences through the collection and analysis of non-numerical data. It seeks to answer research questions through the examination of subjective data, such as interviews, focus groups, observations, and textual analysis.

Qualitative research aims to uncover the meaning and significance of social phenomena, and it typically involves a more flexible and iterative approach to data collection and analysis compared to quantitative research. Qualitative research is often used in fields such as sociology, anthropology, psychology, and education.

Qualitative Research Methods

Common qualitative research methods include the following:

One-to-One Interview

This method involves conducting an interview with a single participant to gain a detailed understanding of their experiences, attitudes, and beliefs. One-to-one interviews can be conducted in-person, over the phone, or through video conferencing. The interviewer typically uses open-ended questions to encourage the participant to share their thoughts and feelings. One-to-one interviews are useful for gaining detailed insights into individual experiences.

Focus Groups

This method involves bringing together a group of people to discuss a specific topic in a structured setting. The focus group is led by a moderator who guides the discussion and encourages participants to share their thoughts and opinions. Focus groups are useful for generating ideas and insights, exploring social norms and attitudes, and understanding group dynamics.

Ethnographic Studies

This method involves immersing oneself in a culture or community to gain a deep understanding of its norms, beliefs, and practices. Ethnographic studies typically involve long-term fieldwork and observation, as well as interviews and document analysis. Ethnographic studies are useful for understanding the cultural context of social phenomena and for gaining a holistic understanding of complex social processes.

Text Analysis

This method involves analyzing written or spoken language to identify patterns and themes. Text analysis can be quantitative or qualitative. Qualitative text analysis involves close reading and interpretation of texts to identify recurring themes, concepts, and patterns. Text analysis is useful for understanding media messages, public discourse, and cultural trends.

Case Studies

This method involves an in-depth examination of a single person, group, or event to gain an understanding of complex phenomena. Case studies typically involve a combination of data collection methods, such as interviews, observations, and document analysis, to provide a comprehensive understanding of the case. Case studies are useful for exploring unique or rare cases, and for generating hypotheses for further research.

Process of Observation

This method involves systematically observing and recording behaviors and interactions in natural settings. The observer may take notes, use audio or video recordings, or use other methods to document what they see. Observation is useful for understanding social interactions, cultural practices, and the context in which behaviors occur.

Record Keeping

This method involves keeping detailed records of observations, interviews, and other data collected during the research process. Record keeping is essential for ensuring the accuracy and reliability of the data, and for providing a basis for analysis and interpretation.

Surveys

This method involves collecting data from a large sample of participants through a structured questionnaire. Surveys can be conducted in person, over the phone, through mail, or online. Surveys are useful for collecting data on attitudes, beliefs, and behaviors, and for identifying patterns and trends in a population.

Qualitative data analysis is a process of turning unstructured data into meaningful insights. It involves extracting and organizing information from sources like interviews, focus groups, and surveys. The goal is to understand people’s attitudes, behaviors, and motivations.

Qualitative Research Analysis Methods

Qualitative Research analysis methods involve a systematic approach to interpreting and making sense of the data collected in qualitative research. Here are some common qualitative data analysis methods:

Thematic Analysis

This method involves identifying patterns or themes in the data that are relevant to the research question. The researcher reviews the data, identifies keywords or phrases, and groups them into categories or themes. Thematic analysis is useful for identifying patterns across multiple data sources and for generating new insights into the research topic.
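The grouping step described above can be sketched in Python. Everything here is a hypothetical illustration: the keywords, theme labels, and source names are invented, and `sources_per_theme` is an assumed helper name. In practice the mapping from keywords to themes is the researcher's interpretive judgment, not something the code decides.

```python
from collections import defaultdict

# Hypothetical mapping from researcher-identified keywords to broader themes.
THEME_OF = {
    "tuition": "financial strain",
    "rent": "financial strain",
    "advisor": "support networks",
    "family": "support networks",
}

# Hypothetical keywords identified in each data source.
keywords_by_source = {
    "interview_01": ["tuition", "family"],
    "interview_02": ["rent", "tuition"],
    "focus_group_A": ["advisor"],
}


def sources_per_theme(keywords_by_source, theme_of):
    """Return, for each theme, the sorted list of sources in which it appears."""
    found = defaultdict(set)
    for source, keywords in keywords_by_source.items():
        for keyword in keywords:
            theme = theme_of.get(keyword)
            if theme is not None:  # keywords without a theme stay ungrouped
                found[theme].add(source)
    return {theme: sorted(sources) for theme, sources in found.items()}


themes = sources_per_theme(keywords_by_source, THEME_OF)
print(themes)
```

Listing the sources behind each theme, rather than only counting keywords, makes it easy to see whether a theme recurs across several data sources or comes from a single one.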

Content Analysis

This method involves analyzing the content of written or spoken language to identify key themes or concepts. Content analysis can be quantitative or qualitative. Qualitative content analysis involves close reading and interpretation of texts to identify recurring themes, concepts, and patterns. Content analysis is useful for identifying patterns in media messages, public discourse, and cultural trends.
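For the quantitative variant, a minimal Python sketch is a simple term count over a text. The function name `term_frequencies`, the sample sentence, and the search terms are hypothetical. Counting words is only a starting point; it does not replace the close reading that qualitative content analysis requires.

```python
import re
from collections import Counter


def term_frequencies(text, terms):
    """Count whole-word, case-insensitive occurrences of each term in text."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words)
    return {term: counts[term.lower()] for term in terms}


# Hypothetical text, invented for illustration.
headline_sample = "Crime fears rise as city debates crime policy; mayor promises safety."
frequencies = term_frequencies(headline_sample, ["crime", "safety", "housing"])
print(frequencies)
```

This sketch matches single words only, so multi-word phrases or word variants (for example, "crimes") would need additional handling.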

Discourse Analysis

This method involves analyzing language to understand how it constructs meaning and shapes social interactions. Discourse analysis can involve a variety of methods, such as conversation analysis, critical discourse analysis, and narrative analysis. Discourse analysis is useful for understanding how language shapes social interactions, cultural norms, and power relationships.

Grounded Theory Analysis

This method involves developing a theory or explanation based on the data collected. Grounded theory analysis starts with the data and uses an iterative process of coding and analysis to identify patterns and themes in the data. The theory or explanation that emerges is grounded in the data, rather than preconceived hypotheses. Grounded theory analysis is useful for understanding complex social phenomena and for generating new theoretical insights.

Narrative Analysis

This method involves analyzing the stories or narratives that participants share to gain insights into their experiences, attitudes, and beliefs. Narrative analysis can involve a variety of methods, such as structural analysis, thematic analysis, and discourse analysis. Narrative analysis is useful for understanding how individuals construct their identities, make sense of their experiences, and communicate their values and beliefs.

Phenomenological Analysis

This method involves analyzing how individuals make sense of their experiences and the meanings they attach to them. Phenomenological analysis typically involves in-depth interviews with participants to explore their experiences in detail. Phenomenological analysis is useful for understanding subjective experiences and for developing a rich understanding of human consciousness.

Comparative Analysis

This method involves comparing and contrasting data across different cases or groups to identify similarities and differences. Comparative analysis can be used to identify patterns or themes that are common across multiple cases, as well as to identify unique or distinctive features of individual cases. Comparative analysis is useful for understanding how social phenomena vary across different contexts and groups.
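Once themes have been identified for each case, the comparison itself can be sketched with Python sets. The case names and themes below are invented for illustration, and `compare_cases` is a hypothetical helper that assumes at least one case is supplied.

```python
# Hypothetical themes identified in each case.
themes_by_case = {
    "school_A": {"belonging", "financial strain", "mentorship"},
    "school_B": {"belonging", "financial strain", "commuting"},
    "school_C": {"belonging", "mentorship"},
}


def compare_cases(themes_by_case):
    """Return themes shared by every case and themes unique to a single case."""
    shared = set.intersection(*themes_by_case.values())
    unique = {}
    for case, themes in themes_by_case.items():
        # Union of the themes found in all the other cases.
        others = set().union(
            *(t for c, t in themes_by_case.items() if c != case)
        )
        unique[case] = themes - others
    return shared, unique


shared, unique = compare_cases(themes_by_case)
print("Common to all cases:", shared)
print("Distinctive by case:", unique)
```

Themes that appear in some but not all cases fall into neither group here; they are often the most interesting ones to examine when asking how a phenomenon varies across contexts.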

Applications of Qualitative Research

Qualitative research has many applications across different fields and industries. Here are some examples of how qualitative research is used:

- Market Research: Qualitative research is often used in market research to understand consumer attitudes, behaviors, and preferences. Researchers conduct focus groups and one-on-one interviews with consumers to gather insights into their experiences and perceptions of products and services.

- Health Care: Qualitative research is used in health care to explore patient experiences and perspectives on health and illness. Researchers conduct in-depth interviews with patients and their families to gather information on their experiences with different health care providers and treatments.

- Education: Qualitative research is used in education to understand student experiences and to develop effective teaching strategies. Researchers conduct classroom observations and interviews with students and teachers to gather insights into classroom dynamics and instructional practices.

- Social Work: Qualitative research is used in social work to explore social problems and to develop interventions to address them. Researchers conduct in-depth interviews with individuals and families to understand their experiences with poverty, discrimination, and other social problems.

- Anthropology: Qualitative research is used in anthropology to understand different cultures and societies. Researchers conduct ethnographic studies and observe and interview members of different cultural groups to gain insights into their beliefs, practices, and social structures.

- Psychology: Qualitative research is used in psychology to understand human behavior and mental processes. Researchers conduct in-depth interviews with individuals to explore their thoughts, feelings, and experiences.

- Public Policy: Qualitative research is used in public policy to explore public attitudes and to inform policy decisions. Researchers conduct focus groups and one-on-one interviews with members of the public to gather insights into their perspectives on different policy issues.

How to Conduct Qualitative Research

Here are some general steps for conducting qualitative research:

- Identify your research question: Qualitative research starts with a research question or set of questions that you want to explore. This question should be focused and specific, but also broad enough to allow for exploration and discovery.

- Select your research design: There are different types of qualitative research designs, including ethnography, case study, grounded theory, and phenomenology. Select a design that aligns with your research question and will allow you to gather the data you need to answer it.