
6 Week 5 Introduction to Hypothesis Testing Reading

An introduction to hypothesis testing.

What are you interested in learning about? Perhaps you’d like to know if there is a difference in average final grade between two different versions of a college class? Does the Fort Lewis women’s soccer team score more goals than the national Division II women’s average? Which outdoor sport do Fort Lewis students prefer the most? Do the pine trees on campus differ in mean height from the aspen trees? For all of these questions, we can collect a sample, analyze the data, then make a statistical inference based on the analysis. This means determining whether we have enough evidence to reject our null hypothesis (what is assumed to be true until the evidence shows otherwise). The process is called hypothesis testing.

A really good Khan Academy video introduces the hypothesis test process: Khan Academy Hypothesis Testing. As you watch, please don’t get caught up in the calculations, as we will use SPSS to do them. We will also use SPSS p-values, instead of the referenced Z-table, to make statistical decisions.

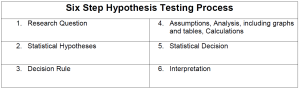

The Six-Step Process

Hypothesis testing requires very specific, detailed steps. Think of it as a mathematical lab report where you have to write out your work in a particular way. There are six steps that we will follow for ALL of the hypothesis tests that we learn this semester.

1. Research Question

All hypothesis tests start with a research question. This is literally a question that includes what you are trying to show, like the examples earlier: Which outdoor sport do Fort Lewis students prefer the most? Is there sufficient evidence to show that the Fort Lewis women’s soccer team scores more goals than the national Division II women’s average?

In this step, besides literally being a question, you’ll want to include:

- mention of your variable(s)

- wording specific to the type of test that you’ll be conducting (mean, mean difference, relationship, pattern)

- specific wording that indicates directionality (are you looking for a ‘difference’, are you looking for something to be ‘more than’ or ‘less than’ something else, or are you comparing one pattern to another?)

Consider this research question: Do the pine trees on campus differ in mean height from the aspen trees?

- The wording of this research question clearly mentions the variables being studied. The independent variable is the type of tree (pine or aspen), and these trees are having their heights compared, so the dependent variable is height.

- ‘Mean’ is mentioned, so this indicates a test with a quantitative dependent variable.

- The question also asks if the tree heights ‘differ’. This specific word indicates that the test being performed is a two-tailed (i.e. non-directional) test. More about the meaning of one/two-tailed will come later.

2. Statistical Hypotheses

A statistical hypothesis test has a null hypothesis: the status quo, what we assume to be true. Its notation is H₀, read as “H naught”. The alternative hypothesis, written H₁ or Hₐ, is what you are trying to show (as stated in your research question). All hypothesis tests must include a null and an alternative hypothesis. We also note which hypothesis test is being done in this step.

The notation for your statistical hypotheses will vary depending on the type of test that you’re doing. Writing statistical hypotheses is NOT the same as most scientific hypotheses. You are not writing sentences explaining what you think will happen in the study. Here is an example of what statistical hypotheses look like using the research question: Do the pine trees on campus differ in mean height from the aspen trees?
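The example that originally followed this paragraph appears to be missing. A plausible form for the tree-height question is sketched below, assuming the symbols μ_pine and μ_aspen (names chosen here, not taken from the original) stand for the population mean heights of the pine and aspen trees on campus:

```latex
\begin{aligned}
H_0 &: \mu_{\text{pine}} = \mu_{\text{aspen}} \\
H_1 &: \mu_{\text{pine}} \neq \mu_{\text{aspen}}
\end{aligned}
```

The "not equal" sign in the alternative hypothesis reflects the word ‘differ’ in the research question, which makes this a two-tailed test.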

3. Decision Rule

In this step, you state which alpha value you will use and, when appropriate, the directionality, or tail, of the test. You also write a statement: “I will reject the null hypothesis if p < alpha” (insert actual alpha value here). Alpha is the level of significance: the probability we are willing to accept of rejecting the null hypothesis when it is actually true. In this introductory class, it will be set to 0.05 or 0.01.

Example of a Decision Rule:

Let alpha=0.01, two-tailed. I will reject the null hypothesis if p<0.01.

4. Assumptions, Analysis and Calculations

Quite a bit goes on in this step. The assumptions for the particular hypothesis test must be checked. SPSS will be used to create appropriate graphs and test output tables. Where appropriate, the test’s effect size will also be calculated in this step.

All hypothesis tests have assumptions that we hope to meet. For example, tests with a quantitative dependent variable consider a histogram(s) to check if the distribution is normal, and whether there are any obvious outliers. Each hypothesis test has different assumptions, so it is important to pay attention to the specific test’s requirements.

Required SPSS output will also depend on the test.

5. Statistical Decision

It is in Step 5 that we determine if we have enough statistical evidence to reject our null hypothesis. We will consult the SPSS p-value and compare to our chosen alpha (from Step 3: Decision Rule).

Put very simply, the p-value is the probability, assuming the null hypothesis is true, of obtaining results as extreme as or more extreme than the results from the given sample. A small p-value means that results like ours would be unlikely if only chance were at work under the null hypothesis. It is not the probability that the null hypothesis itself is true. This concept will be discussed in much further detail in the class notes.

Based on this numerical comparison between the p-value and alpha, we’ll either reject or retain our null hypothesis. Note: You may NEVER ‘accept’ the null hypothesis. This is because it is impossible to prove a null hypothesis to be true.

Retaining the null means that you just don’t have enough evidence to prove your alternative hypothesis to be true, so you fall back to your null. (You retain the null when p is greater than or equal to alpha.)

Rejecting the null means that you did find enough evidence to prove your alternative hypothesis as true. (You reject the null when p is less than alpha.)

Example of a Statistical Decision:

Retain the null hypothesis, because p=0.12 > alpha=0.01.

The p-value will come from SPSS output, and the alpha will have already been determined back in Step 3. You must be very careful when you compare the decimal values of the p-value and alpha. If, for example, you mistakenly think that p=0.12 < alpha=0.01, then you will make the incorrect statistical decision, which will likely lead to an incorrect interpretation of the study’s findings.
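The comparison in Step 5 can also be written out as a tiny function, which makes the direction of the inequality hard to get wrong. This is only a sketch in Python (the course itself uses SPSS), and the function name `statistical_decision` is a hypothetical one chosen for illustration:

```python
def statistical_decision(p_value: float, alpha: float) -> str:
    """Apply the decision rule: reject the null only when p < alpha."""
    if not (0.0 <= p_value <= 1.0 and 0.0 < alpha < 1.0):
        raise ValueError("p_value must be in [0, 1] and alpha in (0, 1)")
    return "Reject" if p_value < alpha else "Retain"


# The example from the text: p = 0.12 compared against alpha = 0.01.
decision = statistical_decision(0.12, 0.01)
print(f"{decision} the null hypothesis")  # prints "Retain the null hypothesis"
```

Note that a p-value exactly equal to alpha leads to retaining the null, matching the rule stated above.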

6. Interpretation

The interpretation is where you write up your findings. The specifics will vary depending on the type of hypothesis test you performed, but you will always include a plain English, contextual conclusion of what your study found (i.e. what it means to reject or retain the null hypothesis in that particular study). You’ll have statistics that you quote to support your decision. Some of the statistics will need to be written in APA style citation (the American Psychological Association style of citation). For some hypothesis tests, you’ll also include an interpretation of the effect size.

Some hypothesis tests will also require an additional (non-parametric) test after the completion of your original test, if the test’s assumptions have not been met. These tests are also called “Post-Hoc tests”.

As previously stated, hypothesis testing is a very detailed process. Do not be concerned if you have read through all of the steps above, and have many questions (and are possibly very confused). It will take time, and a lot of practice to learn and apply these steps!

This Reading is just meant as an overview of hypothesis testing. Much more information is forthcoming in the various sets of Notes about the specifics needed in each of these steps. The Hypothesis Test Checklist will be a critical resource for you to refer to during homework and tests.

Student Course Learning Objectives

4. Choose, administer and interpret the correct tests based on the situation, including identification of appropriate sampling and potential errors

c. Choose the appropriate hypothesis test given a situation

d. Describe the meaning and uses of alpha and p-values

e. Write the appropriate null and alternative hypotheses, including whether the alternative should be one-sided or two-sided

f. Determine and calculate the appropriate test statistic (e.g. z-test, multiple t-tests, Chi-Square, ANOVA)

g. Determine and interpret effect sizes.

h. Interpret results of a hypothesis test

- Use technology in the statistical analysis of data

- Communicate in writing the results of statistical analyses of data

Attributions

Adapted from “Week 5 Introduction to Hypothesis Testing Reading” by Sherri Spriggs and Sandi Dang is licensed under CC BY-NC-SA 4.0 .

Math 132 Introduction to Statistics Readings Copyright © by Sherri Spriggs is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License , except where otherwise noted.


Understanding Hypothesis Testing

Hypothesis testing involves formulating assumptions about population parameters based on sample statistics and rigorously evaluating these assumptions against empirical evidence. This article sheds light on the significance of hypothesis testing and the critical steps involved in the process.

What is Hypothesis Testing?

A hypothesis is an assumption or idea, specifically a statistical claim about an unknown population parameter. For example, a judge assumes a person is innocent and verifies this by reviewing evidence and hearing testimony before reaching a verdict.

Hypothesis testing is a statistical method used to make a decision from experimental data. It starts from an assumption (a hypothesis) about a population parameter and evaluates two mutually exclusive statements about the population to determine which one is better supported by the sample data.

To test the validity of the claim or assumption about the population parameter:

- A sample is drawn from the population and analyzed.

- The results of the analysis are used to decide whether the claim is true or not.

Example: You claim that the average height in a class is 30, or that boys are taller than girls on average. Each of these is an assumption, and we need a statistical method to judge whether the data support it.

Defining Hypotheses

- Null hypothesis ($H_0$): In statistics, the null hypothesis is a general statement or default position that there is no relationship between two measured cases or no difference among groups. In other words, it is the baseline assumption, made based on knowledge of the problem. Example: A company’s mean production is 50 units per day, i.e. $H_0: \mu = 50$.

- Alternative hypothesis ($H_1$): The alternative hypothesis is the hypothesis used in hypothesis testing that is contrary to the null hypothesis. Example: A company’s mean production is not equal to 50 units per day, i.e. $H_1: \mu \neq 50$.

Key Terms of Hypothesis Testing

- Level of significance: The threshold used to decide whether to reject the null hypothesis. Because 100% certainty is not possible, we select a level of significance, denoted $\alpha$ and usually set to 0.05 (5%). It is the probability of rejecting the null hypothesis when it is actually true, so with $\alpha = 0.05$ we accept a 5% risk of a false positive.

- P-value: The p-value, or calculated probability, is the probability of obtaining results at least as extreme as those observed when the null hypothesis ($H_0$) is true. If the p-value is less than the chosen significance level, you reject the null hypothesis, i.e. you conclude that the sample supports the alternative hypothesis.

- Test Statistic: The test statistic is a numerical value calculated from sample data during a hypothesis test, used to determine whether to reject the null hypothesis. It is compared to a critical value or p-value to make decisions about the statistical significance of the observed results.

- Critical value : The critical value in statistics is a threshold or cutoff point used to determine whether to reject the null hypothesis in a hypothesis test.

- Degrees of freedom: Degrees of freedom describe the number of independent pieces of information available for estimating a parameter. They are related to the sample size and determine the shape of the test statistic’s distribution (for example, the t-distribution).

Why do we use Hypothesis Testing?

Hypothesis testing is an important procedure in statistics. It evaluates two mutually exclusive statements about a population to determine which one is better supported by the sample data. When we say that findings are statistically significant, it is thanks to hypothesis testing.

One-Tailed and Two-Tailed Test

A one-tailed test focuses on one direction, either greater than or less than a specified value. We use a one-tailed test when there is a clear directional expectation based on prior knowledge or theory. The critical region is located on only one side of the distribution curve. If the test statistic falls into this critical region, the null hypothesis is rejected in favor of the alternative hypothesis.

One-Tailed Test

There are two types of one-tailed test:

- Left-Tailed (Left-Sided) Test: The alternative hypothesis asserts that the true parameter value is less than the value stated in the null hypothesis. Example: $H_0: \mu \geq 50$ and $H_1: \mu < 50$

- Right-Tailed (Right-Sided) Test: The alternative hypothesis asserts that the true parameter value is greater than the value stated in the null hypothesis. Example: $H_0: \mu \leq 50$ and $H_1: \mu > 50$

Two-Tailed Test

A two-tailed test considers both directions, greater than and less than a specified value. We use a two-tailed test when there is no specific directional expectation and we want to detect any significant difference.

Example: $H_0: \mu = 50$ and $H_1: \mu \neq 50$
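To make the difference between the tails concrete, the sketch below computes the p-value for the same test statistic under each of the three alternatives, using the standard normal distribution from `scipy.stats`. The value `z = 1.7` is a hypothetical test statistic picked only for illustration; it is not taken from any data in this article.

```python
from scipy import stats

z = 1.7  # hypothetical test statistic, chosen only for illustration
alpha = 0.05

p_left = stats.norm.cdf(z)            # H1: mu < 50 (left-tailed)
p_right = stats.norm.sf(z)            # H1: mu > 50 (right-tailed)
p_two = 2 * stats.norm.sf(abs(z))     # H1: mu != 50 (two-tailed)

for name, p in [("left", p_left), ("right", p_right), ("two", p_two)]:
    verdict = "reject H0" if p <= alpha else "fail to reject H0"
    print(f"{name}-tailed: p = {p:.4f} -> {verdict}")
```

With this particular value the right-tailed test rejects the null hypothesis at the 0.05 level while the two-tailed test does not, which is why the direction of the test should be fixed before looking at the data.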


What are Type 1 and Type 2 errors in Hypothesis Testing?

In hypothesis testing, Type I and Type II errors are two possible errors that researchers can make when drawing conclusions about a population based on a sample of data. These errors are associated with the decisions made regarding the null hypothesis and the alternative hypothesis.

- Type I error: We reject the null hypothesis although it is true. The probability of a Type I error is denoted by alpha ($\alpha$).

- Type II error: We fail to reject the null hypothesis although it is false. The probability of a Type II error is denoted by beta ($\beta$).

| Decision | Null Hypothesis is True | Null Hypothesis is False |
|---|---|---|
| Fail to reject the null hypothesis | Correct Decision | Type II Error (False Negative) |
| Reject the null hypothesis | Type I Error (False Positive) | Correct Decision |
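The link between the significance level and the Type I error can be checked with a small simulation. The sketch below is an illustration under assumed settings (2000 simulated experiments of 30 normally distributed observations each, all hypothetical): because every sample is drawn from a population where the null hypothesis is true, each rejection is a false positive, and the share of rejections should land close to alpha.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)   # fixed seed so the sketch is repeatable
alpha = 0.05
n_experiments, n = 2000, 30      # hypothetical simulation sizes

# Every sample is drawn from a population whose mean really is 0,
# so the null hypothesis (mu = 0) is true in every experiment.
samples = rng.normal(loc=0.0, scale=1.0, size=(n_experiments, n))
p_values = stats.ttest_1samp(samples, popmean=0.0, axis=1).pvalue

# Each rejection here is a Type I error (false positive).
type_i_rate = np.mean(p_values <= alpha)
print(f"Observed Type I error rate: {type_i_rate:.3f} (alpha = {alpha})")
```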

How does Hypothesis Testing work?

Step 1: Define the null and alternative hypotheses

State the null hypothesis ($H_0$), representing no effect, and the alternative hypothesis ($H_1$), suggesting an effect or difference.

We first identify the claim we want to test, keeping in mind that the two hypotheses must contradict one another. The tests described here assume normally distributed data.

Step 2: Choose the significance level

Select a significance level ($\alpha$), typically 0.05, to set the threshold for rejecting the null hypothesis. It is chosen before the test is run, so that the decision rule cannot be adjusted to fit the results. The p-value obtained from the test is later compared against this level.

Step 3: Collect and analyze data

Gather relevant data through observation or experimentation. Analyze the data using appropriate statistical methods to obtain a test statistic.

Step 4: Calculate the test statistic

In this step the data are evaluated and a test statistic is computed. The choice of test statistic depends on the type of hypothesis test being conducted.

There are various hypothesis tests, each appropriate for a different goal, such as the Z-test, Chi-square test, T-test, and so on.

- Z-test: Used when the population standard deviation is known (or the sample is large).

- t-test: Used when the population standard deviation is unknown and the sample size is small.

- Chi-square test: Used for categorical data or for testing independence in contingency tables.

- F-test: Often used in analysis of variance (ANOVA) to compare variances or test the equality of means across multiple groups.

When the dataset is small and the population standard deviation is unknown, the T-test is the more appropriate choice.

T-statistic is a measure of the difference between the means of two groups relative to the variability within each group. It is calculated as the difference between the sample means divided by the standard error of the difference. It is also known as the t-value or t-score.
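The description above (difference between the sample means divided by the standard error of that difference) can be sketched directly in code. The two groups below are hypothetical numbers invented for illustration, and the standard error uses the unequal-variance (Welch) form, which is an assumption of this sketch rather than something the article specifies. The result is cross-checked against `scipy.stats.ttest_ind` with `equal_var=False`.

```python
import numpy as np
from scipy import stats

# Hypothetical measurements for two independent groups (illustration only).
group_a = np.array([12.1, 11.8, 12.6, 13.0, 12.4, 11.9])
group_b = np.array([11.2, 11.5, 10.9, 11.8, 11.1, 11.4])

# Difference between the sample means ...
mean_diff = group_a.mean() - group_b.mean()
# ... divided by the standard error of that difference (unequal variances).
std_err = np.sqrt(group_a.var(ddof=1) / len(group_a)
                  + group_b.var(ddof=1) / len(group_b))
t_manual = mean_diff / std_err

# Cross-check against SciPy's Welch t-test.
t_scipy, p_value = stats.ttest_ind(group_a, group_b, equal_var=False)
print(f"t (manual) = {t_manual:.4f}, t (scipy) = {t_scipy:.4f}, p = {p_value:.4f}")
```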

Step 5: Compare the test statistic

In this stage, we decide whether to reject or fail to reject the null hypothesis. There are two ways to make this decision.

Method A: Using critical values

Comparing the test statistic with the tabulated critical value (for a two-tailed test, compare the absolute value of the test statistic):

- If Test Statistic > Critical Value: Reject the null hypothesis.

- If Test Statistic ≤ Critical Value: Fail to reject the null hypothesis.

Note: Critical values are predetermined threshold values used to make a decision in hypothesis testing. To determine them, we typically refer to a statistical distribution table, such as the normal distribution or t-distribution tables.
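Instead of a printed table, the same critical values can be read from the inverse cumulative distribution function (`ppf`) in `scipy.stats`. The sketch below shows the two-tailed case at a 0.05 significance level; the choice of 9 degrees of freedom for the t-distribution is a hypothetical example.

```python
from scipy import stats

alpha = 0.05

# Two-tailed critical value from the standard normal distribution.
z_crit = stats.norm.ppf(1 - alpha / 2)

# Two-tailed critical value from a t-distribution; df = 9 is a
# hypothetical choice (e.g. a sample of 10 observations).
t_crit = stats.t.ppf(1 - alpha / 2, df=9)

print(f"z critical = {z_crit:.3f}")  # about 1.960
print(f"t critical = {t_crit:.3f}")  # about 2.262
```

The t critical value is larger than the z critical value because the t-distribution has heavier tails when the sample is small.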

Method B: Using P-values

We can also come to a conclusion using the p-value:

- If the p-value is less than or equal to the significance level, i.e. ($p \leq \alpha$), you reject the null hypothesis. This indicates that the observed results are unlikely to have occurred by chance alone, providing evidence in favor of the alternative hypothesis.

- If the p-value is greater than the significance level, i.e. ($p > \alpha$), you fail to reject the null hypothesis. This suggests that the observed results are consistent with what would be expected under the null hypothesis.

Note: The p-value is the probability of obtaining a test statistic as extreme as, or more extreme than, the one observed in the sample, assuming the null hypothesis is true. To determine the p-value, we typically refer to a statistical distribution table, such as the normal distribution or t-distribution tables, or use statistical software.

Step 6: Interpret the results

At last, we can conclude our experiment using method A or B.

Calculating test statistic

To validate our hypothesis about a population parameter we use statistical functions. For normally distributed data, we use the z-score, p-value, and level of significance (alpha) to weigh the evidence for our hypothesis.

1. Z-statistics:

Used when the population standard deviation is known.

$$z = \frac{\bar{x} - \mu}{\frac{\sigma}{\sqrt{n}}}$$

- $\bar{x}$ is the sample mean,

- $\mu$ represents the population mean,

- $\sigma$ is the population standard deviation

- and n is the size of the sample.
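The z formula above translates into a one-line function. The helper name `z_statistic` and the numbers in the usage example are hypothetical, chosen so the arithmetic is easy to follow by hand.

```python
import math


def z_statistic(sample_mean: float, pop_mean: float,
                pop_std: float, n: int) -> float:
    """z = (x_bar - mu) / (sigma / sqrt(n))."""
    return (sample_mean - pop_mean) / (pop_std / math.sqrt(n))


# Hypothetical numbers: sample mean 52 from n = 36 observations,
# tested against mu = 50 with known sigma = 6.
z = z_statistic(sample_mean=52.0, pop_mean=50.0, pop_std=6.0, n=36)
print(f"z = {z:.2f}")  # 2 / (6 / 6) = 2.00
```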

2. T-Statistics

The t-test is used when the population standard deviation is unknown, typically with a small sample (n < 30).

The t-statistic is given by:

$$t = \frac{\bar{x} - \mu}{s / \sqrt{n}}$$

- t = t-score,

- x̄ = sample mean

- μ = population mean,

- s = standard deviation of the sample,

- n = sample size
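As a quick check of the t formula, the sketch below computes the statistic by hand for a small sample and compares it with `scipy.stats.ttest_1samp`. The sample values and the hypothesized mean of 50 are hypothetical and serve only as an illustration.

```python
import numpy as np
from scipy import stats

# Hypothetical small sample (n < 30) and hypothesized population mean.
sample = np.array([49.2, 51.5, 48.7, 50.9, 47.8, 52.3, 49.9, 50.4])
mu = 50.0

x_bar = sample.mean()
s = sample.std(ddof=1)          # sample standard deviation
n = len(sample)
t_manual = (x_bar - mu) / (s / np.sqrt(n))

# Cross-check with SciPy's one-sample t-test.
t_scipy, p_value = stats.ttest_1samp(sample, popmean=mu)
print(f"t (manual) = {t_manual:.4f}, t (scipy) = {t_scipy:.4f}, p = {p_value:.4f}")
```

Using `ddof=1` matters here: it gives the sample standard deviation, which is what the formula calls s.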

3. Chi-Square Test

The Chi-Square test for independence is used for categorical (non-normally distributed) data:

$$\chi^2 = \sum \frac{(O_{ij} - E_{ij})^2}{E_{ij}}$$

- $O_{ij}$ is the observed frequency in cell $ij$

- $i, j$ are the row and column indices respectively.

- $E_{ij}$ is the expected frequency in cell $ij$, calculated as: $\frac{\text{Row total} \times \text{Column total}}{\text{Total observations}}$
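The chi-square formula and the expected-frequency rule above can be sketched as follows. The 2x2 table of counts is hypothetical, invented for illustration. The manual result is compared with `scipy.stats.chi2_contingency`, with `correction=False` so that SciPy does not apply Yates' continuity correction and evaluates the same formula.

```python
import numpy as np
from scipy import stats

# Hypothetical 2x2 contingency table of observed counts (illustration only).
observed = np.array([[30, 20],
                     [20, 30]])

row_totals = observed.sum(axis=1, keepdims=True)
col_totals = observed.sum(axis=0, keepdims=True)
total = observed.sum()

# E_ij = (row total * column total) / total observations
expected = row_totals * col_totals / total
chi2_manual = ((observed - expected) ** 2 / expected).sum()

# correction=False disables Yates' continuity correction so that SciPy
# evaluates the same formula as above.
chi2_scipy, p_value, dof, _ = stats.chi2_contingency(observed, correction=False)
print(f"chi2 (manual) = {chi2_manual:.3f}, chi2 (scipy) = {chi2_scipy:.3f}, "
      f"dof = {dof}, p = {p_value:.4f}")
```

Here every expected count is 25, so each cell contributes (5² / 25) = 1 and the statistic is 4.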

Real life Examples of Hypothesis Testing

Let’s examine hypothesis testing using two real life situations,

Case A: Does a New Drug Affect Blood Pressure?

Imagine a pharmaceutical company has developed a new drug that they believe can effectively lower blood pressure in patients with hypertension. Before bringing the drug to market, they need to conduct a study to assess its impact on blood pressure.

- Before Treatment: 120, 122, 118, 130, 125, 128, 115, 121, 123, 119

- After Treatment: 115, 120, 112, 128, 122, 125, 110, 117, 119, 114

Step 1 : Define the Hypothesis

- Null Hypothesis : (H 0 )The new drug has no effect on blood pressure.

- Alternate Hypothesis : (H 1 )The new drug has an effect on blood pressure.

Step 2: Define the Significance level

Let’s set the significance level at 0.05: we reject the null hypothesis if the evidence suggests less than a 5% chance of observing results this extreme due to random variation alone.

Step 3 : Compute the test statistic

Using a paired T-test, analyze the data to obtain a test statistic and a p-value.

The test statistic (here, the T-statistic) is calculated from the differences between blood pressure measurements before and after treatment.

t = m/(s/√n)

- m = mean of the differences d_i = X_after,i − X_before,i

- s = standard deviation of the differences d_i

- n = sample size

Then m = -3.9, s ≈ 1.37 and n = 10.

Using the formula for the paired t-test, we calculate the T-statistic = -9.

Step 4: Find the p-value

The calculated t-statistic is -9 and degrees of freedom df = 9, you can find the p-value using statistical software or a t-distribution table.

Thus, the two-tailed p-value = 8.538051223166285e-06 (about 8.5 × 10⁻⁶).
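For readers who want to reproduce this lookup with software rather than a table, a minimal sketch using the t-distribution's survival function in `scipy.stats` is shown below. It assumes a two-tailed test, matching the alternative hypothesis in Step 1.

```python
from scipy import stats

t_statistic = -9.0   # value from Step 3
df = 9               # n - 1 for n = 10 paired observations

# Two-tailed p-value: probability of |T| >= 9 under the null hypothesis.
p_value = 2 * stats.t.sf(abs(t_statistic), df)
print(f"p-value = {p_value:.3e}")
```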

Step 5: Result

- If the p-value is less than or equal to 0.05, the researchers reject the null hypothesis.

- If the p-value is greater than 0.05, they fail to reject the null hypothesis.

Conclusion: Since the p-value (8.538051223166285e-06) is less than the significance level (0.05), the researchers reject the null hypothesis. There is statistically significant evidence that the average blood pressure before and after treatment with the new drug is different.

Python Implementation of Case A

Let’s create hypothesis testing with python, where we are testing whether a new drug affects blood pressure. For this example, we will use a paired T-test. We’ll use the scipy.stats library for the T-test.

Scipy is a mathematical library in Python that is mostly used for mathematical equations and computations.

We will implement our first real life problem via python,

```python
import numpy as np
from scipy import stats

# Data
before_treatment = np.array([120, 122, 118, 130, 125, 128, 115, 121, 123, 119])
after_treatment = np.array([115, 120, 112, 128, 122, 125, 110, 117, 119, 114])

# Step 1: Null and Alternate Hypotheses
# Null Hypothesis: The new drug has no effect on blood pressure.
# Alternate Hypothesis: The new drug has an effect on blood pressure.
null_hypothesis = "The new drug has no effect on blood pressure."
alternate_hypothesis = "The new drug has an effect on blood pressure."

# Step 2: Significance Level
alpha = 0.05

# Step 3: Paired T-test
t_statistic, p_value = stats.ttest_rel(after_treatment, before_treatment)

# Step 4: Calculate T-statistic manually
m = np.mean(after_treatment - before_treatment)
s = np.std(after_treatment - before_treatment, ddof=1)  # using ddof=1 for sample standard deviation
n = len(before_treatment)
t_statistic_manual = m / (s / np.sqrt(n))

# Step 5: Decision
if p_value <= alpha:
    decision = "Reject"
else:
    decision = "Fail to reject"

# Conclusion
if decision == "Reject":
    conclusion = "There is statistically significant evidence that the average blood pressure before and after treatment with the new drug is different."
else:
    conclusion = "There is insufficient evidence to claim a significant difference in average blood pressure before and after treatment with the new drug."

# Display results
print("T-statistic (from scipy):", t_statistic)
print("P-value (from scipy):", p_value)
print("T-statistic (calculated manually):", t_statistic_manual)
print(f"Decision: {decision} the null hypothesis at alpha={alpha}.")
print("Conclusion:", conclusion)
```

```
T-statistic (from scipy): -9.0
P-value (from scipy): 8.538051223166285e-06
T-statistic (calculated manually): -9.0
Decision: Reject the null hypothesis at alpha=0.05.
Conclusion: There is statistically significant evidence that the average blood pressure before and after treatment with the new drug is different.
```

In the above example, given the T-statistic of approximately -9 and an extremely small p-value, the results indicate a strong case to reject the null hypothesis at a significance level of 0.05.

- The results suggest that the new drug has a significant effect on blood pressure, lowering it in this sample.

- The negative T-statistic indicates that the mean blood pressure after treatment is lower than the mean before treatment in the same patients.

Case B : Cholesterol level in a population

Data: A sample of 25 individuals is taken, and their cholesterol levels are measured.

Cholesterol Levels (mg/dL): 205, 198, 210, 190, 215, 205, 200, 192, 198, 205, 198, 202, 208, 200, 205, 198, 205, 210, 192, 205, 198, 205, 210, 192, 205.

Hypothesized population mean (μ): 200 mg/dL

Population standard deviation (σ): 5 mg/dL (given for this problem)

Step 1: Define the Hypothesis

- Null Hypothesis (H 0 ): The average cholesterol level in a population is 200 mg/dL.

- Alternate Hypothesis (H 1 ): The average cholesterol level in a population is different from 200 mg/dL.

Step 2: Define the Significance Level

As the direction of deviation is not given, we assume a two-tailed test. Based on a normal distribution table, the critical values for a significance level of 0.05 (two-tailed) are approximately -1.96 and 1.96.

Step 3: Compute the test statistic

The mean of the 25 sample values is 202.04 mg/dL, so the z formula gives $Z = (202.04 - 200) / (5 / \sqrt{25}) = 2.04$.

Step 4: Result

Since the absolute value of the test statistic (2.04) is greater than the critical value (1.96), we reject the null hypothesis and conclude that there is statistically significant evidence that the average cholesterol level in the population is different from 200 mg/dL.

Python Implementation of Case B

```python
import scipy.stats as stats
import math
import numpy as np

# Given data
sample_data = np.array(
    [205, 198, 210, 190, 215, 205, 200, 192, 198, 205, 198, 202, 208,
     200, 205, 198, 205, 210, 192, 205, 198, 205, 210, 192, 205])
population_std_dev = 5
population_mean = 200
sample_size = len(sample_data)

# Step 1: Define the Hypotheses
# Null Hypothesis (H0): The average cholesterol level in a population is 200 mg/dL.
# Alternate Hypothesis (H1): The average cholesterol level in a population is different from 200 mg/dL.

# Step 2: Define the Significance Level
alpha = 0.05  # Two-tailed test

# Critical values for a significance level of 0.05 (two-tailed)
critical_value_left = stats.norm.ppf(alpha / 2)
critical_value_right = -critical_value_left

# Step 3: Compute the test statistic
sample_mean = sample_data.mean()
z_score = (sample_mean - population_mean) / \
    (population_std_dev / math.sqrt(sample_size))

# Step 4: Result
# Check if the absolute value of the test statistic is greater than the critical values
if abs(z_score) > max(abs(critical_value_left), abs(critical_value_right)):
    print("Reject the null hypothesis.")
    print("There is statistically significant evidence that the average cholesterol level in the population is different from 200 mg/dL.")
else:
    print("Fail to reject the null hypothesis.")
    print("There is not enough evidence to conclude that the average cholesterol level in the population is different from 200 mg/dL.")
```

```
Reject the null hypothesis.
There is statistically significant evidence that the average cholesterol level in the population is different from 200 mg/dL.
```
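Case B uses the critical-value approach (Method A). As a cross-check, the same data can be run through the p-value approach (Method B). The sketch below is one way to do that, assuming a two-tailed test and the same known population standard deviation of 5 mg/dL. Both methods should lead to the same decision.

```python
import math

import numpy as np
from scipy import stats

# Cholesterol data and parameters from Case B.
sample_data = np.array(
    [205, 198, 210, 190, 215, 205, 200, 192, 198, 205, 198, 202, 208,
     200, 205, 198, 205, 210, 192, 205, 198, 205, 210, 192, 205])
population_mean = 200
population_std_dev = 5
alpha = 0.05

z_score = (sample_data.mean() - population_mean) / (
    population_std_dev / math.sqrt(len(sample_data)))

# Two-tailed p-value from the standard normal distribution.
p_value = 2 * stats.norm.sf(abs(z_score))

print(f"z = {z_score:.2f}, p-value = {p_value:.4f}")
print("Reject H0" if p_value <= alpha else "Fail to reject H0")
```

The p-value comes out a little above 0.04, so the decision at the 0.05 level is the same as with the critical values, although it would not be at the 0.01 level.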

Limitations of Hypothesis Testing

- Although a useful technique, hypothesis testing does not offer a comprehensive understanding of the topic being studied. It concentrates on specific hypotheses and statistical significance without fully reflecting the complexity or whole context of the phenomenon.

- The accuracy of hypothesis testing results is contingent on the quality of available data and the appropriateness of statistical methods used. Inaccurate data or poorly formulated hypotheses can lead to incorrect conclusions.

- Relying solely on hypothesis testing may cause analysts to overlook significant patterns or relationships in the data that are not captured by the specific hypotheses being tested. This limitation underscores the importance of complementing hypothesis testing with other analytical approaches.

Hypothesis testing stands as a cornerstone in statistical analysis, enabling data scientists to navigate uncertainties and draw credible inferences from sample data. By systematically defining null and alternative hypotheses, choosing significance levels, and leveraging statistical tests, researchers can assess the validity of their assumptions. The article also elucidates the critical distinction between Type I and Type II errors, providing a comprehensive understanding of the nuanced decision-making process inherent in hypothesis testing. The real-life example of testing a new drug’s effect on blood pressure using a paired T-test showcases the practical application of these principles, underscoring the importance of statistical rigor in data-driven decision-making.

Frequently Asked Questions (FAQs)

1. What are the 3 types of hypothesis test?

There are three types of hypothesis tests: right-tailed, left-tailed, and two-tailed. Right-tailed tests assess whether a parameter is greater than a specified value, and left-tailed tests whether it is less. Two-tailed tests check for a difference in either direction.

2. What are the 4 components of hypothesis testing?

- Null Hypothesis ($H_0$): No effect or difference exists.

- Alternative Hypothesis ($H_1$): An effect or difference exists.

- Significance Level ($\alpha$): Risk of rejecting the null hypothesis when it is true (Type I error).

- Test Statistic: Numerical value representing the observed evidence against the null hypothesis.

3. What is hypothesis testing in ML?

It is a statistical method for evaluating the performance and validity of machine learning models. It tests specific hypotheses about model behavior, such as whether features influence predictions or whether a model generalizes well to unseen data.

4. What is the difference between Pytest and Hypothesis in Python?

Pytest is a general-purpose testing framework for Python code, while Hypothesis is a property-based testing framework for Python that generates test cases from specified properties of the code.


Data Science Central


An introduction to Statistical Inference and Hypothesis testing

- March 22, 2020 at 10:22 am

In a previous blog (The difference between statistics and data science), I discussed the significance of statistical inference. In this section, we expand on these ideas.

The goal of statistical inference is to make a statement about something that is not observed within a certain level of uncertainty. Inference is difficult because it is based on a sample i.e. the objective is to understand the population based on the sample . The population is a collection of objects that we want to study/test. For example, if you are studying quality of products from an assembly line for a given day, then the whole production for that day is the population. In the real world, it may be hard to test every product – hence we draw a sample from the population and infer the results based on the sample for the whole population.

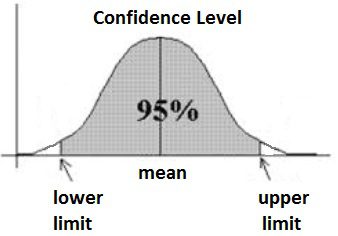

In this sense, the statistical model provides an abstract representation of the population and how the elements of the population relate to each other. Parameters are numbers that represent features or associations of the population. A parameter is a summary description of a fixed characteristic or measure of the target population: the true value that would be obtained if we had taken a census instead of a sample. Examples of parameters include the mean (μ), variance (σ²), standard deviation (σ), and proportion (π). Because a census is rarely possible, we estimate parameters from the data; the corresponding quantity computed from a sample (for example, the sample mean) is called a statistic. A sampling distribution is the probability distribution of a statistic obtained from a large number of samples drawn from the population. In sampling, the confidence interval provides a more continuous measure of uncertainty. It proposes a range of plausible values for an unknown parameter (for example, the mean). For example, suppose the mean height in a given sample is 175 cm and we report a 95% confidence interval around it. The 95% refers to the procedure: if the experiment were repeated many times, about 95% of the intervals constructed this way would contain the true population mean, and about 5% would not.

Image source and reference: An introduction to confidence intervals
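The repeated-sampling reading of a 95% confidence interval can be checked with a small simulation. This is a sketch under stated assumptions: the population is taken to be normal with mean 175 and standard deviation 7 (made-up values), and the interval uses the normal approximation `mean ± z * s / sqrt(n)`.

```python
import random
from statistics import NormalDist, mean, stdev

random.seed(0)  # fixed seed so the run is repeatable
TRUE_MEAN, TRUE_SD = 175.0, 7.0   # assumed population values (illustrative)
N, TRIALS = 50, 2000
z = NormalDist().inv_cdf(0.975)   # about 1.96 for a 95% interval

covered = 0
for _ in range(TRIALS):
    sample = [random.gauss(TRUE_MEAN, TRUE_SD) for _ in range(N)]
    margin = z * stdev(sample) / N ** 0.5
    # Count the interval if it contains the true population mean
    if mean(sample) - margin <= TRUE_MEAN <= mean(sample) + margin:
        covered += 1

coverage = covered / TRIALS
print(f"Intervals containing the true mean: {coverage:.1%}")
```

The printed share should land close to 95%. Any single interval either contains the true mean or it does not; the 95% describes the long-run behaviour of the method.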

Hypothesis testing

Having understood sampling and inference, let us now explore hypothesis testing. Hypothesis testing enables us to make claims about the distribution of data or about whether one set of results differs from another. It allows us to draw conclusions about the population using sample data. In a hypothesis test, we evaluate two mutually exclusive statements about a population to determine which is better supported by the sample data. The Null Hypothesis (H0) is a statement of no change and is assumed to be true unless evidence indicates otherwise. It is the one we want to disprove. The Alternative Hypothesis (H1 or Ha) is the opposite of the null hypothesis and represents the claim being tested. We are trying to collect evidence in favour of the alternative hypothesis. The Probability value (P-Value) is the probability, assuming the null hypothesis is true, of obtaining a sample at least as extreme as the current one. It is not the probability that the null hypothesis is true. The Significance Level (α) defines the cut-off p-value below which a sample is taken to contradict the null hypothesis. If P-Value ≤ α, there is sufficient evidence to reject the null hypothesis in favour of the alternative hypothesis. If P-Value > α, we fail to reject the null hypothesis.
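To make the p-value definition concrete, here is a small sketch using an exact binomial calculation. The scenario is hypothetical: a coin is flipped 100 times and lands heads 60 times, with H0 "the coin is fair" and Ha "the coin favours heads". The function name is illustrative.

```python
from math import comb

def binomial_p_value_right(successes, n, p0=0.5):
    """P(X >= successes) for X ~ Binomial(n, p0), i.e. computed under H0."""
    return sum(
        comb(n, k) * p0 ** k * (1 - p0) ** (n - k)
        for k in range(successes, n + 1)
    )

alpha = 0.05
p_value = binomial_p_value_right(60, 100)  # results at least as extreme as 60 heads
decision = "reject H0" if p_value <= alpha else "fail to reject H0"
print(round(p_value, 4), decision)
```

The p-value here is the probability of 60 or more heads if the coin really were fair. It says nothing directly about the probability that the coin is fair.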

Central limit theorem

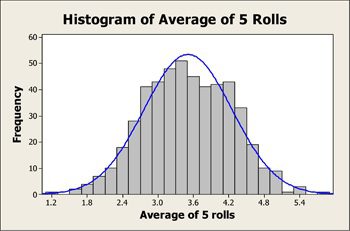

The central limit theorem is at the heart of hypothesis testing. When the parameters of a population cannot be measured directly, we need a way to infer them from a sample. For example, if we want to know the average weight of all the dogs in the world, it is not possible to weigh every dog and compute the mean. So we use the central limit theorem and the confidence interval, which enable us to infer the mean of the population within a certain margin.

Suppose we take multiple samples: a first sample of, say, 40 dogs, for which we compute the mean, then a second sample of, say, 50 dogs, and so on. If we repeat the process for a large number of random samples that are independent of each other, the ‘mean of the means’ of these samples approximates the mean of the whole population. In addition, as per the central limit theorem, the histogram of the sample means approaches a bell curve as the samples get larger. The central limit theorem is significant because it applies even when the underlying distribution is unknown or non-normal (for example, binomial), provided it has finite variance. This means techniques like hypothesis testing can be applied to data from many distributions, not just the normal distribution.

Image source: minitab .
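The behaviour described above can be sketched with a simulation. The population here is an assumption chosen for illustration: an exponential distribution with mean 1, which is strongly skewed and clearly not normal.

```python
import random
from statistics import mean, stdev

random.seed(1)  # fixed seed so the run is repeatable

def sample_mean(n):
    """Mean of n independent draws from an exponential population with mean 1."""
    return mean(random.expovariate(1.0) for _ in range(n))

# Repeat the "take a sample of 40 and compute its mean" step many times
means = [sample_mean(40) for _ in range(3000)]

print(round(mean(means), 2))   # expected to be close to the population mean, 1
print(round(stdev(means), 2))  # expected to be close to 1 / sqrt(40)
```

Plotting a histogram of `means` would show a roughly bell-shaped curve centred near 1, even though the individual draws are heavily skewed.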


Statistical Inference: Types, Procedure & Examples

Arpita Srivastava

Content Writer

Statistical inference is defined as the process of analysing data and drawing conclusions about a population from data that are subject to random variation. Hypothesis testing and confidence intervals are two applications of statistical inference.

- Statistical inference is a technique that uses random sampling to make decisions about the parameters of a population.

- The method is based on the concept of probability distribution.

- It allows us to evaluate the relationship between the dependent and independent variables.

- Statistical inference aims to estimate the uncertainty or variation from sample to sample.

- It enables us to provide a range of plausible values for the true value of a population parameter.

- The process involves the collection of quantitative data.

- Descriptive statistics and inferential statistics are the two main branches of statistics; statistical inference belongs to the latter.

- Polling performed during the election is a real-life example of the inference.

The components used in statistical inference are as follows:

- Size of the sample

- Variability in the sample

Key Terms: Statistical Inference, Probability Distribution, Independent Variables, Dependent Variable, Descriptive Statistics, Inferential Statistics, Bivariate Regression, Multivariate Regression, Anova or T-test, Chi-Square Statistic, Contingency Table

Types of Statistical Inference


Different types of statistical inference which are used to draw conclusions are as follows:

Pearson Correlation Coefficient

Pearson Correlation Coefficient is a type of coefficient that specifies the ratio between the covariance of two variables and the product of their standard deviations.

- The value ranges from -1 to 1.

- It can be mathematically represented as:

$\rho_{x,y} = \dfrac{\operatorname{cov}(x, y)}{\sigma_x \sigma_y}$
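The formula can be sketched directly in code. The data (hours studied and exam scores) are made up for illustration, and the function name `pearson_r` is an assumption.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation: covariance divided by the product of standard deviations."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / n
    sx = sqrt(sum((x - mx) ** 2 for x in xs) / n)
    sy = sqrt(sum((y - my) ** 2 for y in ys) / n)
    return cov / (sx * sy)

hours = [1, 2, 3, 4, 5]          # hypothetical hours studied
scores = [52, 55, 61, 64, 70]    # hypothetical exam scores
r = pearson_r(hours, scores)
print(round(r, 3))
```

Dividing the covariance and both standard deviations by the same `n` means the choice between `n` and `n - 1` cancels out in the ratio.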

Bivariate Regression

Bivariate Regression Analysis is a form of analysis that specifies the relationship between two variables. It is used for testing the hypothesis of variables. The analysis determines the strength of the relationship between variables.

- One variable acts as the dependent variable, and the other variable acts as the independent variable.

- For a linear relationship, bivariate regression is written as:

$y = \beta_0 + \beta_1 x + \varepsilon$
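A minimal sketch of estimating β0 and β1 by ordinary least squares, which is one common way to fit this model (the source does not say which estimator it has in mind). The data and the function name `fit_line` are illustrative.

```python
def fit_line(xs, ys):
    """Least-squares estimates (b0, b1) for the model y = b0 + b1 * x + error."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    # Slope: covariation of x and y divided by the variation of x
    b1 = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    # Intercept: forces the line through the point of means
    b0 = my - b1 * mx
    return b0, b1

hours = [1, 2, 3, 4, 5]          # independent variable (hypothetical)
scores = [52, 55, 61, 64, 70]    # dependent variable (hypothetical)
b0, b1 = fit_line(hours, scores)
print(round(b0, 2), round(b1, 2))
```

Here `b1` estimates how much the dependent variable changes for a one-unit change in the independent variable.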

Multivariate Regression

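Multivariate Regression is a type of regression that specifies the degree to which a dependent variable is related to more than one independent variable. With a single dependent variable, as in the formula below, it is often called multiple regression. Linear regression of this kind is among the simplest machine learning algorithms.

- Multivariate Regression can mathematically be represented as:

$Y = \beta_0 + \beta_1 X_1 + \dots + \beta_k X_k + \varepsilon$, where ε is the residual.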

Anova, also known as analysis of variance, is a statistical method used to test whether the mean of a dependent variable differs across the groups defined by one or more independent variables.

The t-test is an important method in statistical inference that tests whether the means of two groups differ. In its one-sample form, it compares a sample mean with a hypothesized population mean.

- It can be represented as:

$t = \dfrac{m - \mu}{s / \sqrt{n}}$, where m is the sample mean, μ is the hypothesized population mean, s is the sample standard deviation, and n is the sample size.
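The one-sample t statistic can be sketched as follows. The heights and the hypothesized mean of 170 are made-up values, and `one_sample_t` is an illustrative name.

```python
from math import sqrt
from statistics import mean, stdev

def one_sample_t(sample, mu0):
    """t = (m - mu0) / (s / sqrt(n)), with s the sample standard deviation."""
    return (mean(sample) - mu0) / (stdev(sample) / sqrt(len(sample)))

heights = [172, 178, 175, 169, 181, 174, 177, 176]  # hypothetical sample, in cm
t = one_sample_t(heights, 170)
print(round(t, 2))
```

Turning `t` into a p-value requires the t distribution with n - 1 degrees of freedom, which the Python standard library does not provide; a statistics package or a t-table would be needed for that step.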

However, the most common and widely used types of statistical inference are

- Confidence intervals

- Hypothesis testing


Statistical Inference Procedure

The statistical inference procedure includes the following steps:

- Start with a theory.

- Create a research hypothesis.

- Operationalize the variables.

- Identify the population to which the findings should apply.

- Create a null hypothesis for this population.

- Gather a sample from the population and carry out the study.

- Use a statistical test to see whether the sample's properties differ enough from what is expected under the null hypothesis to reject it.

Statistical Inference Solution

Statistical inference solutions make effective use of statistical data about a group of individuals or trials. They cover every aspect of the work, including data collection, organization, investigation, and analysis.

- People can gain knowledge after starting work in a variety of fields by using statistical inference solutions.

The following are some statistical inference solution facts:

- It is a common method for predicting whether the observed samples are independent of a specific population type.

- The method includes Poisson or normal distribution methods.

The statistical inference solution aids in evaluating the expected model's parameter(s), such as normal mean or binomial proportion.

Importance of Statistical Inference

The importance of statistical inference is that it helps examine the data properly. To develop an effective solution, accurate data analysis is required to interpret the research findings.

- Inferential statistics is used to forecast the future based on a variety of observations from various fields.

- It allows us to draw conclusions about the data.

- The method also assists us in providing a likely range of values for the true value of something in the population.

Statistical inference is used in a variety of fields, including:

- Business Analysis

- Artificial Intelligence

- Financial Analysis

- Fraud Detection

- Machine Learning

- Pharmaceutical Sector

- Share market.


Things to Remember

- Statistical inference is used to test hypotheses and calculate confidence intervals.

- It is also referred to as inferential statistics.

- The method consists of analyzing and drawing conclusions from data that is subject to random variation.

- It employs a variety of statistical analysis techniques to reach a conclusion about the population.

- In statistics, descriptive statistics are used to describe data.

- On the other hand, inferential statistics are used to make predictions based on the data.

- Inferential statistics uses data from a sample to generalize to the entire population.


Sample Questions

Ques: A card is drawn from a shuffled pack of 52 cards. The trial is repeated a total of 400 times, and the number of times each suit was drawn is listed below:

| Suit | Spade | Club | Heart | Diamond |
|---|---|---|---|---|
| Number of times drawn | 90 | 100 | 120 | 90 |

What is the likelihood of drawing the following suits if the card is drawn at random? (5 marks)

(A) Diamond Card

(B) Black Card

(C) Except for spade

Ans: Through statistical inference solution.

Total number of trials = 400

i.e., 100 + 90 + 120 + 90 = 400

(A) The probability of drawing a diamond card is:

Number of trials in which a diamond card is drawn = 90

Hence, P(diamond card) = 90/400 = 0.225

(B) The probability of drawing a black card is:

Number of trials in which a black card appeared = 100 + 90 = 190

Hence, P(black card) = 190/400 = 0.475

(C) Except spade

Number of trials in which a card other than a spade appeared = 90 + 100 + 120 = 310

Hence, P(except spade) = 310/400 = 0.775
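The three answers are relative frequencies and can be checked in a few lines. The per-suit counts below are the ones implied by the sums in the worked answer (spade 90, club 100, heart 120, diamond 90); treat that mapping as an assumption.

```python
# Suit counts implied by the worked answer (assumed mapping of counts to suits)
counts = {"spade": 90, "club": 100, "heart": 120, "diamond": 90}
total = sum(counts.values())

p_diamond = counts["diamond"] / total
p_black = (counts["spade"] + counts["club"]) / total  # spades and clubs are black
p_not_spade = 1 - counts["spade"] / total             # complement rule

print(total, p_diamond, p_black, round(p_not_spade, 3))
```

Part (C) uses the complement rule, P(not spade) = 1 - P(spade), which gives the same result as adding the three other suits.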

Ques: A bag contains two yellow balls, three red balls, and five black balls. From the bag, only one ball is drawn at random. What is the likelihood of drawing a black ball? (3 marks)

Ans: Through statistical inference solution.

- Total number of balls in a bag = 10

- i.e 2 + 3+ 5 = 10

- Number of black balls= 5

- Probability of getting a black ball = Number of black balls / Total number of balls = 5/10

Hence, the probability of getting a black ball is 1/2.

Ques: A card is drawn at random from a deck of 52. What is the likelihood that the card drawn is a face card (only the Jack, King, and Queen)? (3 marks)

Ans: Total number of cards= 52

- Number of a face card in a pack of 52 cards= 12

- Probability of receiving a face card = 12/52 = 3/13

- Hence, the probability of receiving face card is 3/13

Ques: What exactly is statistics? Describe its various types. (5 marks)

Ans: Statistics is the in-depth study of data collection, organisation, interpretation, and presentation. It is, in other words, a type of mathematical analysis that collects and summarises data. It is used in a variety of fields including business, manufacturing, psychology, government, humanities, and so on.

- Statistics data is gathered through the use of a sample procedure or other methods.

- Descriptive statistics and inferential statistics are the two types of statistical procedures used to analyse data.

- Descriptive statistics are used to summarise the data in a sample using measures such as the mean or standard deviation.

- Inferential statistics are used to draw conclusions about a population from the sample data.

Statistics is divided into two categories:

- Descriptive Statistics

- Inferential Statistics

Ques: What is the purpose of statistical inference? (3 marks)

Ans: Inferential statistics use data from a sample to draw conclusions about the larger population from which the sample was drawn. The goal of statistical inference is to draw conclusions from a sample and apply them to a large population.

- It employs probability theory to investigate the probabilities of sample characteristics.

- The most commonly used methods are hypothesis tests, analysis of variance, and so on.

Ques: Find the probability of getting an odd number when a die is tossed? (2 marks)

Ans: When a die is tossed there are 6 possible outcomes, S = { 1, 2, 3, 4, 5, 6 }

According to the question, the favorable outcomes for getting an odd number are { 1, 3, 5 }

Therefore, no. of favorable event = 3

And the total no. of outcomes = 6

Therefore, the probability of getting an odd number when a die is tossed is 3 / 6 = 1 / 2

Ques: A bag contains three yellow balls, three red balls, and six black balls. From the bag, only one ball is drawn at random. What is the likelihood of drawing a yellow ball? (3 marks)

- Total number of balls in a bag = 12

- i.e 3 + 3+ 6 = 12

- Number of yellow balls= 3

- Probability of getting a yellow ball = Number of yellow balls / Total number of balls = 3/12

Hence, the probability of getting a yellow ball is 1/4.

Ques: What is the probability of getting a sum of 8 when two dice are thrown? (3 marks)

Ans: There are 36 possibilities when we throw two dice.

The desired outcome is a sum of 8. There are five favorable outcomes:

{(4,4),(6,2),(5,3),(3,5), (2,6)}

Probability of an event = number of favorable outcomes/ sample space

Probability of getting a sum of 8 = 5/36
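The count of favorable outcomes can be confirmed by enumerating all 36 ordered rolls. This is a brute-force sketch rather than a formula; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Every ordered outcome of two six-sided dice
outcomes = list(product(range(1, 7), repeat=2))

# Keep the rolls whose faces add up to 8
favorable = [roll for roll in outcomes if sum(roll) == 8]

probability = Fraction(len(favorable), len(outcomes))
print(favorable, probability)
```

Enumeration treats (6, 2) and (2, 6) as different outcomes, which is why the answer is 5/36 rather than 3/36.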

Ques: The correlation coefficient of a set of data is found to be 0.9. The standard deviation of data set x (σ x ) = 1 , and standard deviation of data set y (σ y ) = 1.4. Find out the covariance of the data? (3 marks)

Ans: The relationship between correlation and covariance is given by –

Correlation: $\rho_{x,y} = \dfrac{\operatorname{cov}(x, y)}{\sigma_x \sigma_y}$

Values given in the question are,

- Correlation = 0.9

- σ x = 1

- σ y = 1.4

- Therefore, cov(x, y) = 0.9 × 1 × 1.4 = 1.26

Ques: What does a correlation of 0.66 mean? (2 marks)

Ans: A correlation of 1 means a perfect positive correlation, so a correlation of 0.66 indicates a moderately strong positive linear relationship. The share of variance in one variable accounted for by the other is given by the square of the correlation: 0.66² ≈ 0.44, or about 44%.

Ques: Find the standard deviation of 4, 9, 11, 15, 17, 5, 8, 12, 10? (4 Marks)

Ans: First find out the mean: 10.11

Now, subtract the mean individually from each of the data set points provided and then square the obtained result, which is equivalent to the (x - μ)² step.

x would refer to the values given in the question.

| X | 4 | 9 | 11 | 15 | 17 | 5 | 8 | 12 | 10 |

| (x - μ)² | 37.33 | 1.23 | 0.79 | 23.91 | 47.47 | 26.11 | 4.45 | 3.57 | 0.0121 |

Now, add up the obtained results (which is the 'sigma' in the formula): 144.87

Now, divide by N, the number of values in the data (here N = 9), which gives the population variance: ≈ 16.10

And lastly, take the square root to get the population standard deviation: 4.01
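The result can be checked with Python's `statistics` module. The worked answer divides by N, which corresponds to `pstdev` (population standard deviation); `stdev` divides by N - 1 and is shown for comparison.

```python
from statistics import mean, pstdev, stdev

data = [4, 9, 11, 15, 17, 5, 8, 12, 10]

population_sd = pstdev(data)  # divides by N, as in the worked answer
sample_sd = stdev(data)       # divides by N - 1 (sample standard deviation)

print(round(mean(data), 2), round(population_sd, 2), round(sample_sd, 2))
```

Which divisor is appropriate depends on whether the nine values are the whole population or a sample from a larger one; the question does not say, so the answer's choice of N is kept here.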


Hypothesis Testing in Data Science [Types, Process, Example]


In day-to-day life, we come across a large volume and variety of information. Sometimes there is so much of it that we cannot tell whether a given claim is correct. Hypothesis testing addresses this problem: it provides a way to weigh the evidence for or against a belief or claim.

What is Hypothesis Testing?

Hypothesis testing is an integral part of statistical inference. It is used to decide whether sample data drawn from a population are consistent with a hypothesized condition on a population parameter. In simpler terms, it assesses whether the evidence supports a claim or not.

For example, if you claim that students who sit on the last bench perform worse than students sitting on the first bench, this is a hypothesis that needs to be tested with data. Another example is evaluating whether a new business strategy actually works for the business. Questions like these arise constantly when you work with data as a data scientist.

How is Hypothesis Testing Used in Data Science?

It is important to know how and where hypothesis testing is used in data science. Data scientists make many predictions and claims in their day-to-day work, and hypothesis testing is how they check whether a finding is likely to be real rather than due to chance. The main goal is to gauge how well a claim holds up against sample data drawn from the population. When data scientists build models using machine learning algorithms, they need to be able to trust the models and their forecasts. They train the model on sample data and then test whether its results are statistically significant, that is, whether they can be expected to hold for the entire population.

Where and When to Use Hypothesis Test?

Hypothesis testing is widely used when we need to compare results, for example before and after a change. Suppose someone claims that students who write exams with a blue pen always score above 90%. To check this claim, we collect data on students' marks and the pen each student used, then run a test on the results. Hypothesis testing compares the two sets of results (blue pen versus other pens) to see whether the difference between them is larger than chance alone would explain.

How Does Hypothesis Testing Work in Data Science?

Across the data science life cycle, hypothesis testing is done at several stages. In the first stage, alongside EDA, data pre-processing, and manipulation, we run initial hypothesis tests to get a sense of what to expect later. The next round of testing is done once the model is built: we compare its results with the initial tests to confirm whether the insights from the first cycle match those of the second. This helps us understand how the model responds to the sample training data. As we saw above, hypothesis testing is needed whenever we plan to compare two or more groups. When checking the results, it is important to check whether they hold for both the sample and the population, and then to judge whether any disagreement between the results is meaningful or just noise.

Different Types of Hypothesis Testing

Hypotheses can be classified in several ways. The five types covered here are described below:

1. Alternative Hypothesis

The alternative hypothesis states that there is a relationship between two variables, that is, that they are statistically associated. It indicates that the factor under study influences the outcome. An alternative hypothesis is denoted by Ha or H1; the two symbols are interchangeable. For example: children who study from the beginning of the class are less likely to fail. The alternative hypothesis is accepted when the test result is statistically significant. The alternative hypothesis can be further divided into three types.

- Left-tailed: The alternative hypothesis states that the true value of the parameter is less than the value stated in the null hypothesis.

- Right-tailed: The alternative hypothesis states that the true value of the parameter is greater than the value stated in the null hypothesis.

- Two-tailed: The alternative hypothesis states that the true value of the parameter is not equal to the value stated in the null hypothesis, in either direction.

2. Null Hypothesis

The null hypothesis states that there is no relationship between the variables. It is represented as H0 and is assumed to hold unless the data provide sufficient evidence against it. For example: children who study from the beginning of the class are no less likely to fail. The types of null hypothesis are described below:

- Simple Hypothesis: It completely specifies the distribution of the population.

- Composite Hypothesis: It does not completely specify the population distribution.

- Exact Hypothesis: It specifies an exact value for the parameter. Example: μ = 10

- Inexact Hypothesis: It specifies a range of values for the parameter rather than a single value.

3. Non-directional Hypothesis

The non-directional hypothesis is a two-tailed hypothesis: it states that the true value does not equal the hypothesized value, without specifying the direction of the difference. For example: girls and boys use different methods to solve a problem. The hypothesis says only that the methods differ, not which group's is better.

4. Directional Hypothesis

A directional hypothesis states not only that there is a relationship between two variables but also its direction, for example that one variable increases as the other increases. It corresponds to a one-tailed test.

5. Statistical Hypothesis

A statistical hypothesis is a statement about the nature of a population, usually about the value of one of its parameters. It can be tested to decide whether the data we have are consistent with it, which lets us make probabilistic statements about the population. Several tests are available for this, including the t-test, Z-test, and ANOVA.

Methods of Hypothesis Testing

1. Frequentist Hypothesis Testing

Frequentist hypothesis testing draws conclusions from the current data alone, without incorporating prior beliefs. Probability is interpreted as the long-run frequency of an outcome over repeated sampling. The best-known frequentist approach is null hypothesis significance testing.

2. Bayesian Hypothesis Testing

Bayesian hypothesis testing combines prior knowledge with the observed data. Prior knowledge is expressed as a prior distribution (or prior probability), which is updated with the observed data to give the posterior probability of the hypothesis. In the medical industry, for example, doctors use a patient's past records when assessing a condition; those records play the role of the prior and help them understand and predict the patient's current and future health.

Importance of Hypothesis Testing in Data Science

People often assume that data science is all about applying machine learning algorithms and getting results. That is partly true, but working in data science also requires a solid grounding in statistics, because much of the background work is statistical. Statistics plays a role whenever we pre-process, manipulate, and analyze data. Hypothesis testing in particular helps in making confident decisions and drawing well-supported conclusions about the population.

Basic Steps in Hypothesis Testing [Workflow]

1. Null and Alternative Hypothesis

After the initial research into the claim we want to test, the first step is to state the null hypothesis (H0) and the alternative hypothesis (Ha). Once both are stated clearly, it is easier to set up the test mathematically. The null hypothesis usually states that there is no relationship between the variables, whereas the alternative hypothesis states that a relationship exists.

- H0 – Girls, on average, are not stronger than boys

- Ha – Girls, on average, are stronger than boys

2. Data Collection

For the statistical test to be valid, the data must be checked and sampled carefully. If the target data are not properly prepared, it becomes difficult to make reliable statistical inferences about the population. Well-prepared data make the findings of the hypothesis test easier to interpret.

3. Selection of an appropriate test statistic

To analyze the data, we need to choose a statistical test. Many tests are available. The choice depends on the data, for example on how spread out the values are within each group (within-group variance) and how different the groups are from one another (between-group variance).

4. Selection of the appropriate significance level

Before deciding whether to reject the null hypothesis, we must fix the significance level, denoted by alpha (α). It is the probability of rejecting the null hypothesis when it is actually true. Example: if α is 0.05, we accept a 5% risk of concluding that a difference exists when there is none.

5. Calculation of the test statistics and the p-value

The p-value is the probability of obtaining a result at least as extreme as the one observed, assuming the null hypothesis is true. It measures how strongly the sample data contradict the null hypothesis. The lower the p-value, the stronger the evidence against the null hypothesis. For example, with α = 0.05, a p-value of 0.05 or less is considered statistically significant. The p-value is computed from the test statistic, which measures the deviation between the observed value and the value expected under the null hypothesis: the greater the deviation, the lower the p-value.

6. Findings of the test

After comparing the p-value with the significance level, we can state our result: we either reject the null hypothesis or fail to reject it, based on the evidence presented by the data.
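Steps 4 to 6 can be illustrated with a small calculation. The sketch below is only an illustration under stated assumptions: the coin-flip data (60 heads in 100 flips), the one-sided alternative, and the function name `binomial_p_value_upper` are hypothetical and do not come from the text above.

```python
from math import comb


def binomial_p_value_upper(successes: int, trials: int, p_null: float = 0.5) -> float:
    """Return P(X >= successes) for X ~ Binomial(trials, p_null).

    This is a one-sided p-value: the probability, assuming the null
    hypothesis is true, of a count at least as large as the observed one.
    """
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


alpha = 0.05  # significance level chosen in step 4

# Hypothetical data: 60 heads observed in 100 flips; H0 says the coin is fair.
p_value = binomial_p_value_upper(60, 100)

# Step 6: compare the p-value with alpha and state the decision.
decision = "reject H0" if p_value <= alpha else "fail to reject H0"
print(f"p-value = {p_value:.4f} -> {decision}")
```

Because the p-value here falls below α = 0.05, the null hypothesis of a fair coin would be rejected in this made-up example.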

How to Calculate Hypothesis Testing?

Hypothesis testing can be done using various statistical tests. One is Z-test. The formula for Z-test is given below:

Z = (x̅ - μ0) / (σ / √n)

In the above equation:

- x̅ is the sample mean

- μ0 is the population mean assumed under the null hypothesis

- σ is the population standard deviation

- n is the sample size

Depending on the Z-test result, we either reject the null hypothesis in favor of the alternative hypothesis or fail to reject it. For a two-sided test, the two hypotheses are written as:

- H0: μ=μ0

- Ha: μ≠μ0

Here,

- H0 = null hypothesis

- Ha = alternative hypothesis

In this way, we calculate the test statistic for a hypothesis test and can apply it to real-world scenarios.
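The Z formula and the two-sided hypotheses above can be sketched in code. This is a minimal illustration, not a definitive implementation: the function names and the numbers (sample mean 103, μ0 = 100, σ = 15, n = 100) are hypothetical, and the p-value assumes the test statistic follows a standard normal distribution under H0.

```python
from math import erf, sqrt


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))


def one_sample_z_test(sample_mean: float, mu0: float, sigma: float, n: int):
    """Return (Z, two-sided p-value) for H0: mu = mu0 against Ha: mu != mu0."""
    z = (sample_mean - mu0) / (sigma / sqrt(n))
    p_value = 2.0 * (1.0 - normal_cdf(abs(z)))
    return z, p_value


# Hypothetical numbers chosen only for illustration.
z, p = one_sample_z_test(sample_mean=103.0, mu0=100.0, sigma=15.0, n=100)
print(f"Z = {z:.2f}, p-value = {p:.4f}")
```

With these made-up inputs the standard error is 15 / √100 = 1.5, so Z = 3 / 1.5 = 2, and the p-value falls just under 0.05.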

Real-World Examples of Hypothesis Testing

Hypothesis testing has a wide variety of use cases that prove beneficial for various industries.

1. Healthcare

In the healthcare industry, research and experiments that evaluate whether a medicine or drug is effective rely on hypothesis testing.

2. Education sector

Hypothesis testing helps compare different teaching techniques to see which ones improve understanding for different students.

3. Mental Health

Hypothesis testing helps in indicating the factors that may cause some serious mental health issues.

4. Manufacturing

Hypothesis testing helps determine whether a change in a manufacturing process improved the process and the quantity produced. In the same way, hypothesis testing has many other use cases across different sectors.

Error Terms in Hypothesis Testing

1. Type-I error

A Type I error occurs when the null hypothesis is rejected even though it is true. This kind of error is also known as a false positive, because the test reports an effect (a positive result) that does not actually exist. For example, an innocent person goes to jail because he is wrongly found guilty.

2. Type-II error

A Type II error occurs when the null hypothesis is not rejected even though it is false. This kind of error is also called a false negative, because the test fails to detect an effect that really exists. For example, a person who is guilty is found not guilty in court.

3. Level of Significance

The level of significance (α) is the threshold used to decide whether the null hypothesis H0 can be rejected. It is the probability of a Type I error that we are willing to accept. A result is called statistically significant when its p-value is at or below this level.

4. P-value

P-value stands for probability value. It tells us the probability of obtaining observations at least as extreme as the ones in the sample when the null hypothesis is true. Its main purpose is to check the statistical significance of a result.

5. High P-Values

A high p-value indicates that the result is not statistically significant. For example, at a significance level of 0.05, a p-value greater than 0.05 is considered high. A high p-value means that the sample does not provide strong enough evidence to reject the null hypothesis. It does not prove that the null hypothesis is true.
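The error terms above can be made concrete with a small simulation. The sketch below is an illustration under stated assumptions: it repeatedly draws normal samples with a known standard deviation, applies a two-sided Z-test at α = 0.05, and counts how often each kind of error occurs. The function names, the sample size of 25, the true mean of 0.5 used for the false-null case, and the fixed random seed are all hypothetical choices.

```python
import random
from math import erf, sqrt


def two_sided_p(sample, mu0, sigma):
    """Two-sided Z-test p-value for H0: mu = mu0, with sigma assumed known."""
    n = len(sample)
    z = (sum(sample) / n - mu0) / (sigma / sqrt(n))
    return 2.0 * (1.0 - 0.5 * (1.0 + erf(abs(z) / sqrt(2.0))))


def rejection_rate(true_mean, mu0=0.0, sigma=1.0, n=25, alpha=0.05,
                   trials=4000, seed=0):
    """Fraction of simulated samples for which H0: mu = mu0 is rejected."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        if two_sided_p(sample, mu0, sigma) <= alpha:
            rejections += 1
    return rejections / trials


# H0 is true (true mean equals mu0): every rejection is a Type I error.
type_i_rate = rejection_rate(true_mean=0.0)

# H0 is false (true mean is 0.5): every failure to reject is a Type II error.
type_ii_rate = 1.0 - rejection_rate(true_mean=0.5)

print(f"Type I error rate  ~ {type_i_rate:.3f}")
print(f"Type II error rate ~ {type_ii_rate:.3f}")
```

In this setup the Type I error rate should land close to the chosen α, while the Type II error rate depends on how far the true mean is from μ0 and on the sample size.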

In hypothesis testing, each step, from preparing the data to selecting the statistical test, contributes to the quality of the final result. Whenever we want to draw conclusions about a population from a sample, hypothesis testing is a useful concept to apply.

Frequently Asked Questions (FAQs)

We can test a hypothesis by selecting an appropriate hypothesis test and drawing a conclusion from its results.

Many statistical tests are used for hypothesis testing, including the Z-test, the T-test, etc.

Hypothesis testing helps us run experiments and work on a specific research topic so that we can draw conclusions from the results.

State the null and alternative hypotheses, collect the data, select a statistical test, select a significance level, calculate the p-value, and report your findings.

In simple words, parametric tests assume that the data follow a particular distribution, such as the normal distribution, whereas non-parametric tests make few or no assumptions about the distribution of the data.

Gauri Guglani

Gauri Guglani works as a Data Analyst at Deloitte Consulting. She majored in Information Technology and has a strong interest in the field of data science. She has both technical and managerial skills and is a strong communicator. Since her undergraduate studies, Gauri has developed a deep interest in writing content and sharing her knowledge through blogs and articles. She loves writing on topics related to Statistics, Python Libraries, Machine Learning, Natural Language Processing, and many more.


Statistical Inference

Statistics is a branch of Mathematics that deals with the collection, analysis, interpretation, and presentation of numerical data. In other words, it is the science of working with quantitative data. The main purpose of Statistics is to draw an accurate conclusion about a larger population using a limited sample.

Types of Statistics

Statistics can be classified into two different categories. The two different types of Statistics are:

- Descriptive Statistics

- Inferential Statistics

In Statistics, descriptive statistics describe the data, whereas inferential statistics help you make predictions from the data. In inferential statistics, the data are taken from a sample and allow you to generalize to the population. In general, inference means drawing a conclusion from evidence, so statistical inference means drawing conclusions about a population from a sample. To reach a conclusion about the population, it uses various statistical analysis techniques. In this article, inferential statistics is explained in detail. You are going to learn the definition of statistical inference, its types, solutions, and examples.

Statistical Inference Definition

Statistical inference is the process of analysing results and drawing conclusions from data subject to random variation. It is also called inferential statistics. Hypothesis testing and confidence intervals are applications of statistical inference. Statistical inference is a method of making decisions about the parameters of a population, based on random sampling. It helps to assess the relationship between the dependent and independent variables. The purpose of statistical inference is to estimate the uncertainty, or sample-to-sample variation. It allows us to provide a probable range of values for the true value of something in the population. The components used for making statistical inference are:

- Sample Size

- Variability in the sample

- Size of the observed differences
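The "probable range of values" mentioned above is usually reported as a confidence interval, and its width depends on the sample size and the variability in the sample. The sketch below is only an approximation under stated assumptions: the tree heights are hypothetical, the function name `approx_95_ci` is made up for illustration, and it uses the normal critical value 1.96 where a t critical value would be more appropriate for a sample this small.

```python
from math import sqrt
from statistics import mean, stdev


def approx_95_ci(sample):
    """Approximate 95% confidence interval for the population mean.

    Uses mean +/- 1.96 * (sample standard deviation / sqrt(n)), which
    relies on a normal approximation.
    """
    n = len(sample)
    centre = mean(sample)
    margin = 1.96 * stdev(sample) / sqrt(n)
    return centre - margin, centre + margin


# Hypothetical tree heights in metres, for illustration only.
heights = [4.1, 3.8, 4.4, 4.0, 3.9, 4.3, 4.2, 3.7, 4.0, 4.1]

low, high = approx_95_ci(heights)
print(f"approximate 95% CI: ({low:.2f}, {high:.2f})")

# The same values repeated four times mimic a larger sample with similar
# variability; the interval becomes narrower.
low_big, high_big = approx_95_ci(heights * 4)
print(f"with 4x the data:   ({low_big:.2f}, {high_big:.2f})")
```

Comparing the two intervals shows the role of sample size: with similar variability, more observations give a narrower range for the population mean.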

Types of Statistical Inference

There are different types of statistical inferences that are extensively used for making conclusions. They are:

- One sample hypothesis testing

- Confidence Interval

- Pearson Correlation

- Bi-variate regression

- Multi-variate regression

- Chi-square statistics and contingency table

- ANOVA or T-test

Statistical Inference Procedure

The steps involved in inferential statistics are:

- Begin with a theory

- Create a research hypothesis

- Operationalize the variables

- Recognize the population to which the study results should apply

- Formulate a null hypothesis for this population

- Accumulate a sample from the population and continue the study

- Conduct statistical tests to see if the collected sample properties are adequately different from what would be expected under the null hypothesis to be able to reject the null hypothesis

Statistical Inference Solution

Statistical inference solutions make efficient use of statistical data relating to groups of individuals or trials. They cover every stage of the work, including collecting, investigating, analysing, and organizing the data. With statistical inference solutions, people working in diverse fields can gain knowledge from their data. Some facts about statistical inference solutions are:

- It is common to assume that the observed sample consists of independent observations from a population of a given type, such as Poisson or normal

- Statistical inference solutions are used to estimate the parameter(s) of the assumed model, such as a normal mean or a binomial proportion

Importance of Statistical Inference

Inferential statistics is important for examining data properly. To reach an accurate conclusion, proper data analysis is needed to interpret the research results. It is widely used to predict future observations in different fields, and it helps us make inferences about the data. Statistical inference has a wide range of applications in different fields, such as:

- Business Analysis

- Artificial Intelligence

- Financial Analysis

- Fraud Detection

- Machine Learning

- Share Market

- Pharmaceutical Sector

Statistical Inference Examples

An example of statistical inference is given below.

Question: A card is drawn from a shuffled pack of cards. This trial is repeated 400 times, and the number of times each suit is drawn is given below:

| Suit | Spade | Clubs | Hearts | Diamonds |
|---|---|---|---|---|
| No. of times drawn | 90 | 100 | 120 | 90 |

When a card is drawn at random, what is the probability of getting:

- A diamond card

- A black card

- Any card except a spade

By statistical inference solution,

Total number of events = 400

i.e.,90+100+120+90=400

(1) The probability of getting diamond cards:

Number of trials in which diamond card is drawn = 90

Therefore, P(diamond card) = 90/400 = 0.225

(2) The probability of getting black cards:

Number of trials in which black card showed up = 90+100 =190

Therefore, P(black card) = 190/400 = 0.475

(3) The probability of getting any card except a spade:

Number of trials in which a card other than a spade showed up = 100+120+90 = 310

Therefore, P(except spade) = 310/400 = 0.775
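The three answers above are relative frequencies, which can be checked with a few lines of code. This is a minimal sketch: the counts are taken from the table in the question, while the names `counts` and `relative_frequency` are hypothetical.

```python
# Observed suit counts from the 400 trials in the question.
counts = {"Spade": 90, "Clubs": 100, "Hearts": 120, "Diamonds": 90}
total = sum(counts.values())


def relative_frequency(suits):
    """Estimated probability of drawing any of the given suits."""
    return sum(counts[suit] for suit in suits) / total


p_diamond = relative_frequency(["Diamonds"])
p_black = relative_frequency(["Spade", "Clubs"])   # the two black suits
p_not_spade = 1 - relative_frequency(["Spade"])    # complement of a spade

print(total, p_diamond, p_black, round(p_not_spade, 3))
```

Using the complement for the third answer gives the same result as adding the counts for clubs, hearts, and diamonds.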


An Introduction to Statistics: Understanding Hypothesis Testing and Statistical Errors

Priya Ranganathan

1 Department of Anesthesiology, Critical Care and Pain, Tata Memorial Hospital, Mumbai, Maharashtra, India

2 Department of Surgical Oncology, Tata Memorial Centre, Mumbai, Maharashtra, India

The second article in this series on biostatistics covers the concepts of sample, population, research hypotheses and statistical errors.

How to cite this article

Ranganathan P, Pramesh CS. An Introduction to Statistics: Understanding Hypothesis Testing and Statistical Errors. Indian J Crit Care Med 2019;23(Suppl 3):S230–S231.