Scholar Commons


Engineering Ph.D. Theses

Efficient hardware implementation of deep learning networks based on the convolutional neural network.

Anaam Ansari

Date of Award

Document type.

Dissertation

Santa Clara : Santa Clara University, 2023.

Degree Name

Doctor of Philosophy (PhD)

Electrical and Computer Engineering

First Advisor

Tokunbo Ogunfunmi

Image classification, speech processing, autonomous driving, and medical diagnosis have made the adoption of Deep Neural Networks (DNNs) mainstream. Many deep networks such as AlexNet, GoogLeNet, ResidualNet, MobileNet, YOLOv3 and Transformers have achieved immense success and popularity. However, implementing these deep and complex networks in hardware is challenging. The growing demand for DNN applications in mobile devices and data centers has led researchers to explore application-specific hardware accelerators for DNNs. Numerous hardware- and software-based solutions have been proposed to improve DNN throughput, latency, performance and accuracy, and any solution for hardware acceleration needs to optimize within the space confined by these metrics. Hardware acceleration is a highly effective and viable way of running DNNs on mobile devices: it makes the power of DNNs available at the edge in a compact and power-efficient form factor.

In this thesis, we introduce a novel architecture that uses a generalized method called Single Input Partial Product 2-Dimensional Convolution (SIPP2D Convolution), which calculates a 2-D convolution quickly and efficiently. We present the exploratory designs that culminated in SIPP2D and emphasize its benefits. The SIPP2D architecture prevents the re-fetching of inputs and weights for the calculation of partial products. It can calculate the output for any input size and kernel size with low memory traffic while maintaining low latency and high throughput compared to other popular techniques. In addition to being compatible with any input and kernel size, the SIPP2D architecture can be modified to support any allowable stride. We describe the data flow and algorithmic modifications to SIPP2D that extend its capabilities to accommodate multi-stride convolutions. Supporting multi-stride convolutions is an essential addition to the SIPP2D architecture, increasing its versatility and network-agnostic character for convolutional DNNs. Along with architectural explorations, we have also performed research in the area of model optimization. It is widely understood that a change at the algorithmic level of the network pays significant dividends at the hardware level. Compression and optimization techniques such as pruning and quantization help reduce the size of the model while maintaining accuracy at an acceptable level. Thus, combining techniques such as channel pruning with SIPP2D can further boost its performance. In this thesis, we examine the performance of channel-pruned SIPP2D compared to other compressed models.

Traditionally, quantization of weights and inputs is used to reduce memory transfer and power consumption. However, quantizing the outputs of layers can be a challenge, since the output of each layer changes with the input. In our research, we quantize the output of each layer of AlexNet and VGGNet-16 to analyze the effect on accuracy. We use the Signal-to-Quantization-Noise Ratio (SQNR) to empirically determine the integer length (IL) and the fractional length (FL) of the fixed-point precision that yield the best SQNR and highest accuracy. Based on our observations, we can report that accuracy is sensitive to the fractional length as well as the integer length. For AlexNet, we observe a deterioration in accuracy as the word length (WL) decreases: the Top-5 accuracy goes from 77% for floating-point precision to 56% for a WL of 12 and an FL of 8. The results are similar for VGGNet-16, whose Top-5 accuracy decreases from 82% for floating point to 30% for a WL of 12 and an FL of 8. Beyond the effect of a small word length, we observe that accuracy depends strongly on how the bits are split between integer and fractional length. We have also analyzed the loss that remains after retraining post quantization, using polynomial fitting to relate the fractional length to the drop in accuracy still sustained after retraining a quantized network.
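The fixed-point analysis described above can be illustrated with a short sketch. It is not the thesis code: the function names, the convention that the sign bit is counted inside the integer length, and the synthetic Gaussian "activations" are all assumptions made for illustration. It rounds values to a grid with a given IL and FL and reports the resulting SQNR in decibels.

```python
import numpy as np

def quantize_fixed_point(x, integer_length, fractional_length):
    """Round x to a signed fixed-point grid (assumption: sign bit counted in IL)."""
    step = 2.0 ** -fractional_length                 # smallest representable increment
    max_val = 2.0 ** (integer_length - 1) - step     # largest representable value
    min_val = -(2.0 ** (integer_length - 1))         # most negative representable value
    return np.clip(np.round(x / step) * step, min_val, max_val)

def sqnr_db(x, x_q):
    """Signal-to-quantization-noise ratio in dB between x and its quantized copy."""
    signal_power = np.mean(x ** 2)
    noise_power = np.mean((x - x_q) ** 2)
    return 10.0 * np.log10(signal_power / noise_power)

# Synthetic stand-in for a layer output; real activations would come from the network.
rng = np.random.default_rng(0)
activations = rng.normal(0.0, 2.0, size=10_000)

# Same word length (IL + FL = 12), different splits between IL and FL.
for il, fl in [(4, 8), (6, 6), (8, 4)]:
    q = quantize_fixed_point(activations, il, fl)
    print(f"IL={il} FL={fl} SQNR={sqnr_db(activations, q):.1f} dB")
```

Sweeping the IL/FL split at a fixed word length, as in the loop, is one way to see why both lengths matter: too few integer bits clip large values, too few fractional bits coarsen small ones.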

In summary, combining the enhanced SIPP2D architecture with compression techniques such as channel pruning and quantization is highly advantageous and conducive to widespread adoption. The SIPP2D architecture, with its flexible data flow and algorithmic modifications to support multi-stride convolutions, offers a powerful and versatile framework for deep neural networks.

Recommended Citation

Ansari, Anaam, "Efficient Hardware Implementation of Deep Learning Networks Based on the Convolutional Neural Network" (2023). Engineering Ph.D. Theses . 49. https://scholarcommons.scu.edu/eng_phd_theses/49

Since August 01, 2023

Included in

Electrical and Computer Engineering Commons


Deep learning based image super-resolution with adaption and extension of convolutional neural network models


- Khanh, Ha Viet

- Strathclyde Thesis Copyright

- University of Strathclyde

- Doctoral (Postgraduate)

- Doctor of Philosophy (PhD)

- Centre for Excellence in Signal Image Processing

- Department of Electronic and Electrical Engineering

- Image super-resolution is the process of creating a high-resolution image from a single or multiple low-resolution images. As one low-resolution image can yield several possible high-resolution solutions, image super-resolution is an ill-posed inverse problem. Deep learning-based approaches have recently emerged and blossomed, producing state-of-the-art results in image, language, and speech recognition. Thanks to their capability for feature extraction and mapping, they are very helpful for predicting the details lost in the low-resolution image. In real-world problems, however, many factors significantly affect the super-resolution results, including the model design, the characteristics of the low-resolution image, and how features are exploited or combined from the given data. This thesis focuses on improving the quality of image reconstruction using CNN-based models by tackling three problems or weaknesses in existing models and algorithms. First, the commonly used skip connection proposed in ResNet lacks discriminative learning ability for image super-resolution. It ignores the fact that natural images have a lot of structure, i.e., strong correlations between neighbouring pixels, and that some information is more important than other information for predicting HR images. The second problem, which appears in CNN-based image fusion models, is the inadequate fusion of features from multiple sources, together with a lack of regularisation for improving the generality of fusion-based models. Finally, a gradient regularisation approach has recently been proposed to improve the convergence of GANs but has shown instability during training. Hence, addressing this instability will contribute to the area of super-resolution that incorporates GANs. Initially, contributions are introduced for single image super-resolution using a novel highway connection-based architecture. The new highway connection, which is composed of a non-linear gating mechanism, efficiently learns different hierarchical features and recovers much more detail in pixel-wise image reconstruction. Besides, the introduced highway connection-based model achieves faster and better convergence and is less prone to training problems than models using the common skip connections of the well-known residual neural networks. Second, a deep learning-based framework has been developed for enhancing the spatial resolution of a low-resolution hyperspectral image (Lr-HSI) by fusing it with a high-resolution multispectral image (Hr-MSI). To tackle the existing discrepancy in spectral range and spatial dimensions, multi-scale fusion is proposed to efficiently address the disparity in spatial resolution between the two source inputs. Furthermore, an auxiliary unsupervised task is proposed, which acts as an additional form of regularisation to further improve the generalisation performance of the supervised task. Finally, a parameter-free framework that adaptively adjusts the strength of gradient regularisation is proposed to improve the stability and performance of Generative Adversarial Networks. The method automatically differentiates the strength of the regulariser based on the difference in the discriminator's behaviour off the convergence point. In summary, this thesis contributes to the deep learning-based super-resolution community by proposing one architecture for single image super-resolution, one fusion-based framework for HSI super-resolution and one adaptive method for gradient regularisation in Generative Adversarial Networks. The novelty and robustness of the proposed methods have been demonstrated by extensive experiments. The quantitative results are compared to the state of the art, and thus give many users of signal and image analysis the potential to improve the resolution of their final outputs.

- Marshall, Stephen, 1958-

- Ren, Jinchang

- Doctoral thesis

- 10.48730/zhyz-a563

- Government of Vietnam

| Date Uploaded | Visibility |
|---|---|
| 2023-05-11 | Public |


Application of convolutional neural networks in object detection, re-identification and recognition

Jazan University (Jazan, Saudi Arabia)

- Computer Science


- Other information and computing sciences not elsewhere classified

PhD thesis in Applied Physics

Convolution function.

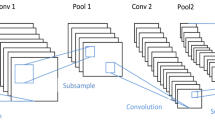

The introduction of convolution functions brought a major revolution to the Neural Network research field. Convolutional Neural Networks (CNNs) are designed particularly for image analysis. Convolution is the integral of the product of two functions in which the second one is reflected and translated by a given value:

$$
(f * g)(t) = \int_{-\infty}^{+\infty} f(\tau)\, g(t - \tau)\, d\tau
$$

In signal processing this operation is closely related to cross-correlation, from which it differs only by the reflection of the second function. In image processing the first function is the image $I$ and the second one is a kernel $k$ (or filter) which shifts along the image. In this case we have a 2D discrete version of the formula, given by:

$$
C[i, j] = \sum_{u=0}^{N-1} \sum_{v=0}^{M-1} k[u, v]\, I[i - u, j - v]
$$

where $C[i, j]$ is the pixel value of the resulting image and $N$, $M$ are the kernel dimensions.
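As a minimal sketch of the discrete 2D convolution, the following NumPy function evaluates it term by term on the "valid" region, where the kernel lies entirely inside the image. The function name and the choice of the valid region are assumptions for illustration and are not taken from the Byron library. The kernel is flipped so that the result is a true convolution and not a cross-correlation.

```python
import numpy as np

def direct_conv2d(image, kernel):
    """'Valid' 2D convolution of a single-channel image, evaluated term by term."""
    H, W = image.shape
    N, M = kernel.shape
    out = np.zeros((H - N + 1, W - M + 1))
    flipped = kernel[::-1, ::-1]          # reflection turns correlation into convolution
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            # weighted sum of the N x M window whose top-left corner is (i, j)
            out[i, j] = np.sum(image[i:i + N, j:j + M] * flipped)
    return out

image = np.arange(16, dtype=float).reshape(4, 4)
kernel = np.array([[1.0, 0.0],
                   [0.0, -1.0]])
print(direct_conv2d(image, kernel))       # a 3 x 3 output
```

Dropping the `[::-1, ::-1]` flip gives the cross-correlation that most deep learning libraries call "convolution"; for learned kernels the two differ only in how the weights are stored.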

The use of CNNs in modern image analysis applications can be traced back to multiple causes. First of all, image dimensions keep growing, and thus the number of variables/features, i.e. pixels, is often too big to manage with a standard DNN [1]. Moreover, if we consider detection problems, i.e. the problem of detecting a set of features (or an object) inside a larger pattern, we want a system able to recognize the object regardless of where it appears in the input. In other words, we want our model to be independent of simple translations.

Both of the above problems can be overcome by CNN models, which use a small kernel, i.e. a weight mask, that is mapped over the full input. A CNN is able to capture the spatial and temporal dependencies in a signal through the application of relevant filters.

The main parameters of this function are therefore the input dimensions and the filter/kernel dimensions, i.e. the number of weights which we have to tune during training. This is the basic idea behind the convolution function, but in many cases (especially in modern deep learning neural networks) we can refine it by playing with the possible movements of the filter mask. In particular, besides the kernel mask size, we can also force the filter to jump along the image, i.e. to move discontinuously and skip some pixels. This parameter, called stride, defines the number of pixels to jump, and it is often used to further reduce the output dimensions.
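To make the effect of the stride on the output dimensions concrete, here is a small sketch of the usual output-size rule for a convolution without padding. The helper name is hypothetical, and the no-padding assumption is ours, since padding is not discussed here.

```python
def conv_output_size(input_size, kernel_size, stride=1):
    """Number of filter positions along one axis, assuming no padding."""
    if kernel_size > input_size:
        raise ValueError("kernel larger than input")
    # the filter starts at 0, stride, 2*stride, ... while it still fits in the input
    return (input_size - kernel_size) // stride + 1

for stride in (1, 2, 3):
    size = conv_output_size(224, 3, stride)
    print(f"stride={stride}: 224 -> {size}")
```

With a 3 x 3 kernel on a 224-pixel axis, a unit stride keeps 222 positions, while a stride of 2 roughly halves that number.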

Given this theoretical background, we can implement the convolution function in many different ways, using different mathematical approaches: a study of the computational efficiency will tell us which approach is best. The first (naive) approach is to use a brute force technique and implement the direct evaluation of the convolution function as described above. This version is certainly the easiest to implement, but its computational performance is so poor that, for the sake of brevity, we excluded it from our tests [2].

Taking into account what we have learned from the DNN models, we can reformulate our problem through an efficient manipulation of the involved matrices, so as to exploit the GEMM algorithm. A direct convolution on an image of size (W x H x C) using a kernel mask of dimensions (k x k) requires O(WHCk^2) operations and thus many matrix products. We can rearrange the involved data to optimize this computation and evaluate a single matrix product: this rearrangement is called the im2col (or im2row) algorithm. The algorithm is just a simple transformation which flattens the original input into a bigger matrix where each column carries all the elements which have to be multiplied by the filter mask in a single step [3]. In this way we can immediately apply our GEMM algorithm to the full image. In Fig. 1 the main scheme of this algorithm is reported. This kind of algorithm certainly optimizes the computational efficiency of the GEMM product, but the price is the large amount of memory needed to store the reorganized input.
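A minimal NumPy sketch of the im2col idea follows. It is an illustration under our own assumptions (unit stride, no padding, channels-last layout, hypothetical function names) and not the Byron or darknet implementation. Each column of the rearranged matrix holds one flattened window, each row of the weight matrix holds one flattened mask, and the whole layer reduces to a single matrix product. Like most deep learning libraries, the sketch does not flip the kernel.

```python
import numpy as np

def im2col(image, k):
    """Rearrange an (H, W, C) image into a (k*k*C, out_h*out_w) matrix of windows."""
    H, W, C = image.shape
    out_h, out_w = H - k + 1, W - k + 1
    cols = np.empty((k * k * C, out_h * out_w), dtype=image.dtype)
    col = 0
    for i in range(out_h):
        for j in range(out_w):
            cols[:, col] = image[i:i + k, j:j + k, :].ravel()   # one window per column
            col += 1
    return cols, (out_h, out_w)

def conv_im2col(image, filters):
    """filters has shape (n_filters, k, k, C); returns (out_h, out_w, n_filters)."""
    n_filters, k = filters.shape[0], filters.shape[1]
    cols, (out_h, out_w) = im2col(image, k)
    weights = filters.reshape(n_filters, -1)    # each row is one flattened mask
    out = weights @ cols                        # the single GEMM call
    return out.T.reshape(out_h, out_w, n_filters)

rng = np.random.default_rng(0)
image = rng.normal(size=(5, 6, 3))
filters = rng.normal(size=(2, 3, 3, 3))
print(conv_im2col(image, filters).shape)
```

The memory cost is visible in the shape of `cols`: every input pixel is copied into up to k*k columns, so the rearranged matrix is roughly k^2 times larger than the original image.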

Using the mathematical theory behind the problem, a third idea arises from the well-known Convolution Theorem: the convolution of our functions (in this case the input image and the weights kernel) becomes a simple element-wise product of their Fourier transforms in frequency space. This is certainly the most “physical” approach to the problem, and probably the easiest one, since the Fourier transform is a well-known, optimized algorithm and many efficient implementations are already available in the literature. One of the most efficient is provided by the FFTW ( Fastest Fourier Transform in the West ) library [ FFTW05 ]: FFTW3 is an open source C subroutine library for computing the discrete Fourier transform (DFT) in multiple dimensions without constraints on input sizes or data types. The library is not only accurate in its computation, but it also provides an efficient parallel version for multi-threaded applications.
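The Convolution Theorem approach can be sketched in a few lines with NumPy's FFT routines, used here as a stand-in for FFTW (an assumption for illustration, as is the function name). Both arrays are zero-padded to the full output size so that the circular convolution computed by the DFT coincides with the linear one.

```python
import numpy as np

def fft_conv2d(image, kernel):
    """Full linear 2D convolution through an element-wise product of spectra."""
    H, W = image.shape
    N, M = kernel.shape
    shape = (H + N - 1, W + M - 1)              # zero-padding avoids wrap-around
    spectrum = np.fft.rfft2(image, s=shape) * np.fft.rfft2(kernel, s=shape)
    return np.fft.irfft2(spectrum, s=shape)     # back to the spatial domain

image = np.array([[1.0, 2.0],
                  [3.0, 4.0]])
kernel = np.array([[1.0, 1.0]])
print(np.round(fft_conv2d(image, kernel), 6))
```

The region where the kernel lies entirely inside the image is the slice `[N-1:H, M-1:W]` of the returned array.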

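A further kind of implementation comes from linear algebra considerations (very close to numerical ones) and is called the Winograd algorithm. It is based on Winograd's minimal filtering algorithms (not to be confused with the Coppersmith-Winograd algorithm for matrix multiplication) and it was designed to reduce the computational cost of the products involved, in particular the number of multiplications. Suppose we have an input image given by just 4 elements and a filter mask with size equal to 3:

$$
d = \begin{bmatrix} d_0 & d_1 & d_2 & d_3 \end{bmatrix}, \qquad g = \begin{bmatrix} g_0 & g_1 & g_2 \end{bmatrix}
$$

We can now use the im2col algorithm described above and reshape our input image and weights into

$$
\begin{bmatrix} d_0 & d_1 \\ d_1 & d_2 \\ d_2 & d_3 \end{bmatrix}, \qquad \begin{bmatrix} g_0 & g_1 & g_2 \end{bmatrix}
$$

Given these data, we can simply compute the output as the matrix product of the two matrices. The Winograd algorithm rewrites this computation as follows [5]:

$$
\begin{bmatrix} g_0 & g_1 & g_2 \end{bmatrix} \begin{bmatrix} d_0 & d_1 \\ d_1 & d_2 \\ d_2 & d_3 \end{bmatrix} = \begin{bmatrix} m_1 + m_2 + m_3 & m_2 - m_3 - m_4 \end{bmatrix}
$$

where

$$
m_1 = (d_0 - d_2)\, g_0, \quad m_2 = (d_1 + d_2)\, \frac{g_0 + g_1 + g_2}{2}, \quad m_3 = (d_2 - d_1)\, \frac{g_0 - g_1 + g_2}{2}, \quad m_4 = (d_1 - d_3)\, g_2
$$

The two outputs therefore require 4 multiplications instead of the 6 of the plain matrix product [4], and the factors which depend only on the filter can be computed once per filter.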
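The smallest instance of Winograd's minimal filtering, usually written F(2, 3), can be checked numerically with the sketch below. The symbol names `d`, `g` and `m1`..`m4` follow the common presentation of the algorithm and are assumptions here, as are the function names. The sketch computes two outputs of a 3-tap, unit-stride filter from 4 inputs with 4 multiplications and compares them with the direct product, which needs 6.

```python
import numpy as np

def winograd_f23(d, g):
    """Two outputs of a 3-tap filter over 4 inputs using 4 multiplications."""
    d0, d1, d2, d3 = d
    g0, g1, g2 = g
    # the filter-only factors (g0+g1+g2)/2 and (g0-g1+g2)/2 can be precomputed
    m1 = (d0 - d2) * g0
    m2 = (d1 + d2) * (g0 + g1 + g2) / 2
    m3 = (d2 - d1) * (g0 - g1 + g2) / 2
    m4 = (d1 - d3) * g2
    return np.array([m1 + m2 + m3, m2 - m3 - m4])

def direct_f23(d, g):
    """Same two outputs computed as plain dot products (6 multiplications)."""
    return np.array([d[0] * g[0] + d[1] * g[1] + d[2] * g[2],
                     d[1] * g[0] + d[2] * g[1] + d[3] * g[2]])

d = np.array([1.0, 2.0, 3.0, 4.0])
g = np.array([1.0, 0.0, -1.0])
print(winograd_f23(d, g), direct_f23(d, g))
```

Larger images are handled by tiling the input into overlapping groups of 4 elements (4 x 4 tiles in 2D), which is where the restriction to 3 x 3 masks and unit stride comes from.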

We tested the computational time efficiency of each algorithm on different random images. The tests were performed on a classical bioinformatics server (128 GB of RAM and 2 E5-2620 CPUs with 8 cores each), and we considered only kernel sizes equal to 3 (the Winograd constraint), varying the input dimensions and the number of filters. In Fig. 1 we show the results of our simulations using the im2col values as reference [6].

In all our simulations we found a visible speedup of the Winograd algorithm over the other two algorithms: for small dimensions we obtain a speedup of more than 5x over im2col and 25x over the FFTW implementation. The worst algorithm is certainly the FFTW one which, despite the efficient FFTW3 parallel library, is always more than 5 times slower than the reference. However, it is interesting to note that the FFTW implementation reaches its best performance when the dimensions are proportional to powers of 2, as expected from the mathematical theory behind the Discrete Fourier Transform.

We can conclude that the Winograd algorithm is certainly the best choice when we have to perform a 2D convolution. The price of this method is its rigid constraints on mask sizes and strides: when it is applicable it remains the best solution, while in all other cases the im2col implementation is a relatively good alternative. The efficiency of the Byron library follows the efficiency of the Winograd algorithm, since most layers in modern deep learning Neural Network models are Convolutional layers with size equal to 3 and unit stride.

[1] If we consider a simple 224 x 224 image with 3 color channels, we obtain a set of 150,528 features. A classical DNN layer with this input size and 1024 nodes would have more than 150 million weights to tune.

[2] Compared to the other implementations, the direct (brute force) convolution algorithm takes orders of magnitude more computational time. For this reason it is not taken into account in our tests. A possible implementation in C++ is however provided in the Byron library.

[3] We work under the assumption that the weights matrix is already a flattened array, and thus each row of the weights matrix represents the full mask.

[4] A multiplication takes 7 clock cycles in a normal CPU while an add takes only 3 clock cycles.

[5] We would also highlight that this formulation is valid only if we consider unit strides.

[6] The im2col algorithm can be found in most Neural Network libraries, and it is also the only convolution function implemented in the darknet library, which is a sort of reference for our work.

Maurice Weiler

Deep learning researcher.

My name is Maurice Weiler and I’m a researcher working on geometric deep learning.

Education : I received my PhD from the University of Amsterdam, supervised by Max Welling . Prior to my doctorate, I obtained a Master's degree in computational and theoretical physics from Heidelberg University. For more details, have a look at my CV .

Research : My research deals with incorporating geometric inductive priors into deep neural networks.

A primary focus of my work is on the design of Equivariant Convolutional Neural Networks (CNNs). These are geometry-aware neural networks that are constrained to commute with geometric transformations of feature vector fields. Equivariance ensures consistent predictions across transformed inputs, which leads to a greatly enhanced data efficiency, robustness and interpretability.
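The simplest instance of equivariance is the translation equivariance of an ordinary convolution: shifting the input and then filtering gives the same result as filtering and then shifting. The NumPy sketch below checks this for a 1D signal with periodic boundaries. It is a toy illustration under our own assumptions (hypothetical function name, translations only) and does not use the escnn library or cover the more general symmetry groups discussed here.

```python
import numpy as np

def circular_correlate(signal, kernel):
    """1D cross-correlation with periodic boundary conditions."""
    n = len(signal)
    return np.array([
        sum(kernel[k] * signal[(i + k) % n] for k in range(len(kernel)))
        for i in range(n)
    ])

signal = np.array([1.0, 4.0, 2.0, 0.0, 3.0, 5.0])
kernel = np.array([1.0, -2.0, 1.0])
shift = 2

shift_then_filter = circular_correlate(np.roll(signal, shift), kernel)
filter_then_shift = np.roll(circular_correlate(signal, kernel), shift)
print(np.allclose(shift_then_filter, filter_then_shift))
```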

During my PhD, I developed a quite general representation theoretic formulation of equivariant CNNs , applying to a wide range of spaces, symmetry groups and group actions. My research showed that equivariant convolutions generally require $G$-steerable kernels , which are symmetry-constrained convolution kernels that are related to quantum representation operators . The endeavor to generalize CNNs to Riemannian manifolds led me to a gauge field theory of convolutional networks which constitutes a paradigm shift from global symmetries to local gauge transformations. Our PyTorch library escnn found use in a variety of applications, ranging from biomedical and satellite image processing to tasks in environmental, chemical and material sciences, or in reinforcement learning and robotics.

If you would like to learn more about my research on equivariant CNNs, please have a look at our book "Equivariant and Coordinate Independent CNNs" and check out my blog posts and publications .

Further interests : Besides my core research topics, I am more broadly interested in generative models, graph neural networks, non-Euclidean embedding methods, PDE-inspired deep models, and deep learning for the physical sciences.

In my free time I enjoy climbing, hiking, MTBing, cooking, playing strategic games and DJing.

Book publication + PhD Thesis

Equivariant and coordinate independent convolutional networks, a gauge field theory of neural networks.

This monograph brings together our findings on the representation theory and differential geometry of equivariant CNNs that we have obtained in recent years. It generalizes previous results, presents novel insights, and adds background knowledge, intuitive explanations, visualizations and examples.

The version linked above is a pre-release of my PhD thesis. A printed edition from an academic publisher will soon be available as well.

- The first part develops the representation theory of equivariant CNNs on Euclidean spaces . It demonstrates how the layers of conventional and generalized CNNs are derived purely from symmetry principles. Popular models – including group convolutional networks, harmonic networks, and tensor field networks – are unified in a common mathematical framework.

- We subsequently extended this formulation to Riemannian manifolds . The requirement for coordinate independence is shown to result in gauge symmetry constraints on the neural connectivity. Part 2 of the book gives an intuitive introduction to CNNs on manifolds. Part 3 formalizes these ideas in the language of associated fiber bundles.

- The fourth part is devoted to applications and instantiations on specific manifolds. It revisits Euclidean CNNs from the differential geometric perspective and covers spherical CNNs and CNNs on general surfaces.

For more details, see the table of contents , read the abstract , intro and outline , or have a look at the list of theorems . Reveal the book's back cover by clicking on its image. You should also check out the associated series of blog posts , which explains key concepts and insights in an easily understandable language.


This is the development code for the doctoral thesis - DEVELOPMENT OF DEEP TRANSFER LEARNING VIA ARTIFICIAL NEURAL NETWORKS IN MODELING THE INDEX OF SPEECH TRANSMISSION -Convolutional Variational AutoEncoder (CVAE) and Deep Transfer Learning with a custom loss function.

EribertoO/PhD-Thesis-Variational-Autoencoder-with-Transfer-Learning


Phd thesis: variational autoencoder with transfer learning.

This is the development code repo for my doctoral thesis entitled:

DEVELOPMENT OF DEEP TRANSFER LEARNING VIA ARTIFICIAL NEURAL NETWORKS IN MODELING THE INDEX OF SPEECH TRANSMISSION

Thesis Proposal - Deep Convolutional Neural Network for Speech Transmission Index Prediction in Classroom Acoustics

This repository contains the code and resources for my thesis proposal on the topic of "Deep Convolutional Neural Network for Speech Transmission Index Prediction in Classroom Acoustics". The proposal is supervised by the following professors:

Prof. Dr.-Ing. Paulo Henrique Trombetta Zannin, Department of Mechanical Engineering, Federal University of Paraná (UFPR)

Prof. Dr. Nilson Barbieri, School of Engineering, Pontifical Catholic University of Paraná (PUCPR)

Prof. Ph.D. Arcanjo Lenzi, Department of Mechanical Engineering, Federal University of Santa Catarina (UFSC)

Prof. Ph.D. Eduardo Márcio de Oliveira Lopes, Department of Mechanical Engineering, Federal University of Paraná (UFPR)

The acoustic comfort in classrooms is a fundamental element that influences the teaching-learning dynamics. Therefore, classrooms with deficient acoustics can have socioeconomic implications for an entire society, as the pedagogical function loses its efficiency. The intelligibility of speech is typically measured using the Speech Transmission Index (STI), which quantifies the speech transmission quality. However, the measurement of STI is complex and requires expensive instrumentation.

Existing literature includes predictive models of STI that utilize the Reverberation Time (RT) as a regression variable. However, these models do not consider the spectral effects of the dual time-frequency localization of the room's impulse response signal, as well as the spectral content of background noise. Consequently, this research aims to model STI by applying a deep one-dimensional convolutional neural network.

The neural network model consists of two inputs: the simulated impulse response obtained through the Virtual Source Method with 10,000 samples, and the background noise with 564 samples. The training target is the predictive STI provided by the IEC 60268-16 standard. Due to the challenge of acquiring in-situ measurements, and to enhance the estimation robustness of the neural network, a novel method called Projective-Adaptive Variational Minimization Transfer Learning (MVPAnP) is proposed. MVPAnP optimizes the pretrained neural network models with simulated data and transfers the learned generalization to a dataset of in-situ measurements.

The model's validation was performed based on experimental acquisition of 60 classrooms with only RT measurements and 15 classrooms with both STI and RT measurements. The model's quality was evaluated using a calibration curve to experimentally validate its performance. The expected results include the development of a novel modeling approach for STI prediction compared to existing models, taking into account the cost and budgetary constraints associated with STI measurement instrumentation.

In conclusion, the proposed model can be used as a support tool for diagnostic evaluation of STI in classrooms, utilizing only the instrumentation typically employed for RT measurements.

- Jupyter Notebook 96.1%

- Python 3.9%

Integrating self-organizing feature map with graph convolutional network for enhanced superpixel segmentation and feature extraction in non-Euclidean data structure

- Published: 24 June 2024

- Yi-Zeng Hsieh (ORCID: orcid.org/0000-0002-3501-3982)

- Chia-Hsuan Wu

- Yu-Ting Chen

Deep learning has been widely applied to Euclidean data, and advances in technology have led to breakthroughs in deep learning architectures. Common neural network architectures include the Deep Neural Network (DNN), the Convolutional Neural Network (CNN) and Long Short-Term Memory (LSTM), and these models have achieved strong results. However, not all data in every field can be represented as Euclidean data, so the Graph Convolutional Network (GCN) was proposed to address this problem. GCN is applied to non-Euclidean data structures represented as graphs, which are composed of nodes and edges, such as chemical compounds or a subset of the web. The graph data type can represent the relationships between nodes, so important features are not lost. Therefore, our paper converts the image into a graph to retain the complete feature information of the image, unlike a CNN, which requires multiple convolution layers of different dimensions to retain the feature information of the image. In the paper, we use a superpixel segmentation algorithm to convert the image to the graph data type. Previous superpixel algorithms are prone to the problem of superpixel block disappearance, and the missing blocks must be zero-padded to correct the dimensional error. The purpose of this thesis is to propose the Self-Organizing Feature Map (SOM) for superpixel segmentation, combined with a graph convolutional network, to solve the problem of incorrect feature extraction caused by superpixel segmentation algorithms. Most superpixel segmentation algorithms use the RGB or CIELAB color space to segment the pixels in the image, which yields features that are not explainable. Therefore, this paper combines the approach with image processing to explain the meaning of the features, and proposes explainable features in the graph data type.
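As a rough illustration of how a graph convolution mixes the features of neighbouring nodes (for example superpixels linked to adjacent superpixels), the sketch below implements one layer with the widely used symmetric normalisation of the adjacency matrix. The abstract does not state which propagation rule the authors use, so the rule, the function name and the toy graph are all assumptions.

```python
import numpy as np

def gcn_layer(adjacency, features, weights):
    """One graph convolution: normalised neighbourhood averaging, linear map, ReLU."""
    a_hat = adjacency + np.eye(adjacency.shape[0])            # add self-loops
    deg = a_hat.sum(axis=1)                                   # node degrees (>= 1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ features @ weights, 0.0)       # ReLU activation

# Toy graph: three nodes in a chain, two features per node, three output features.
adjacency = np.array([[0.0, 1.0, 0.0],
                      [1.0, 0.0, 1.0],
                      [0.0, 1.0, 0.0]])
features = np.array([[1.0, 0.0],
                     [0.0, 1.0],
                     [1.0, 1.0]])
weights = np.ones((2, 3))
print(gcn_layer(adjacency, features, weights).shape)
```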

This is a preview of subscription content, log in via an institution to check access.

Access this article

Price includes VAT (Russian Federation)

Instant access to the full article PDF.

Rent this article via DeepDyve

Institutional subscriptions

Similar content being viewed by others

Object detection using YOLO: challenges, architectural successors, datasets and applications

YOLO-based Object Detection Models: A Review and its Applications

Convolutional neural network: a review of models, methodologies and applications to object detection

Acknowledgements

This study was partly supported by the National Science and Technology Council (NSTC), Taiwan, under NSTC 111-2221-E-011-162-MY3 and 111-2221-E-011-163-MY3.

Author information

Authors and affiliations.

Department of Electrical Engineering, National Taiwan University of Science and Technology, Taipei, Taiwan

Yi-Zeng Hsieh, Chia-Hsuan Wu & Yu-Ting Chen

Corresponding author

Correspondence to Yi-Zeng Hsieh.

Ethics declarations

Conflict of interest.

The authors declare that they have no conflict of interest.

Data Sharing

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Hsieh, YZ., Wu, CH. & Chen, YT. Integrating self-organizing feature map with graph convolutional network for enhanced superpixel segmentation and feature extraction in non-Euclidean data structure. Multimed Tools Appl (2024). https://doi.org/10.1007/s11042-024-19619-5

Received: 21 December 2023

Revised: 21 May 2024

Accepted: 06 June 2024

Published: 24 June 2024

DOI: https://doi.org/10.1007/s11042-024-19619-5

- Image segmentation

- Self-organizing feature map

- Graph convolutional network

agnosis systems. More specifically, the thesis provides the following three main contributions. First, it introduces a novel entropy-based elastic ensemble of Deep Convolutional Neural Networks (DCNNs) architecture termed as 3E-Net for classifying grades of invasive breast carcinoma microscopic images. 3E-Net is based on a

Oulu University of Applied Sciences Information Technology, Internet Services. Author: Hung Dao Title of the bachelor's thesis: Image Classification Using Convolutional Neural Networks Supervisor: Jukka Jauhiainen Term and year of completion: Spring 2020 Number of pages: 31. The objective of this thesis was to study the application of deep ...

Next, Artificial Neural Networks (ANNs), which work as a stepping stone to deep learning, types of ANN methods, and their limitations are explored. Chapter three focuses on deep learning and four of its main architectures including unsupervised pretrained networks, recurrent neural network, recursive neural network, and convolutional neural ...

The publications below describe work that is loosely related to this thesis but not described in the thesis: ImageNet Classification with Deep Convolutional Neural Networks Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton. In Advances in Neural Information Processing Systems 26, (NIPS*26), 2012. (Krizhevsky et al., 2012)

uses a specific model called a neural network [2]. What follows in this thesis is an introduction to supervised learning, an introduction to neural networks, and my work on Convolutional Neural Networks, a specific class of neural networks. 1.2 Supervised Learning

Chapter 2 of this thesis will present a literature review about the convolutional neural network. I shall present some techniques that increase the accuracy for Convolutional Neural Networks (CNNs). To test system performance, the Modified NIST or MNIST dataset demonstrated in [1] was chosen.

In this thesis, we study the topic of deep learning with a focus on image recognition using convolutional neural networks. We cover the various components of deep learning, including the network structure, backpropagation and stochastic gradient descent. We explain the fundamentals of these components and compare theory to practice.

Deep convolutional neural networks (CNNs) recently have shown remarkable success in a variety of areas such as computer vision [1-3] and natural language processing [4-6]. CNNs are biologically inspired by the structure of mammals' visual cortexes as presented in Hubel and Wiesel's model [7]. In 1998, LeCun et al. followed

Deep learning based image super-resolution with adaption and extension of convolutional neural network models. ... (PhD) Department, School or Faculty. ... This thesis focuses on improving the quality of image reconstruction using CNN based models by tackling three problems or weaknesses in existing models and algorithms. First, the commonly ...

In this thesis we explore ways to leverage symmetries to improve the ability of convolutional neural networks to generalize from relatively small samples. We argue and show empirically that in the context of deep learning it is better to learn equivariant rather than invariant representations, because invariant ones lose information too early ...

This dissertation addresses these challenges and presents novel deep convolutional neural network (CNN) techniques for two different medical applications. In addressing the first application of ...

Convolutional Neural Network Architectures Master Thesis of Martin Thoma Department of Computer Science Institute for Anthropomatics and FZI Research Center for Information Technology Reviewer: Prof. Dr.-Ing. R. Dillmann Second reviewer: Prof. Dr.-Ing. J. M. Zöllner Advisor: Dipl.-Inform. Michael Weber Research Period: 03. May 2017 ...

PhD Thesis, 2016. DenseCap: Fully Convolutional Localization Networks for Dense Captioning ... Sports-1M: a dataset of 1.1 million YouTube videos with 487 classes of Sport. This dataset allowed us to train large Convolutional Neural Networks that learn spatio-temporal features from video rather than single, static images. Andrej Karpathy ...

This thesis proposes an approximate nearest neighbor-based method for one-shot learning. This method makes use of the features produced by a pretrained convolutional neural network and builds a proximity forest to classify new classes. The algorithm is tested in two datasets with different ...

Automated Pavement Condition Assessment using Unmanned Aerial Vehicles (UAVs) and Convolutional Neural Network (CNN) by Vinay K. Chawla May, 2021 Director of Thesis: Carol Massarra, PhD Major Department: Construction Management. ...ial in any effort to reduce future economic losses and improve the structural reliability and resil...

Convolutional Neural Network (CNN) is arguably the most utilized model by the computer vision community, which is reasonable thanks to its remarkable performance in object and scene recognition, with respect to traditional hand-crafted features. Nevertheless, it is evident that CNN naturally is availed in its two-dimensional version.

MSc THESIS Exploring Convolutional Neural Networks on the ρ-VEX architecture Jonathan Tetteroo Abstract Faculty of Electrical Engineering, Mathematics and Computer Science CE-MS-2018-10 As machine learning algorithms play an ever increasing role in today's technology, more demands are placed on computational hard-

This thesis investigates the effective deployment of deep Convolutional Neural Networks (CNNs) architectures in two different application areas for security and surveillance purposes, namely person re-identification and aerial, large-scale, object detection and recognition, in which the data capture sources are cameras within CCTV systems and on-board drones, respectively. First of all, person ...

Convolutional Neural Networks (CNNs) are designed particularly for image analysis. Convolution is the mathematical integration of two functions in which the second one is translated by a given value. In signal processing this operation is also called cross-correlation, and it is equivalent to the autocorrelation function computed at a given point.
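
To make the relationship between the two operations named in the snippet above concrete, here is a minimal sketch in plain Python. It assumes 1-D, real-valued, finite sequences and "full" output length; the function names `convolve` and `cross_correlate` are illustrative and do not come from any of the theses listed here. In this discrete setting, textbook convolution flips the kernel before sliding it, while cross-correlation slides it unflipped, so the two agree only when the kernel is symmetric.

```python
def convolve(signal, kernel):
    """Full discrete convolution: out[n] = sum over k of signal[k] * kernel[n - k]."""
    out = [0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out


def cross_correlate(signal, kernel):
    """Full cross-correlation, computed as convolution with the kernel reversed."""
    return convolve(signal, kernel[::-1])


if __name__ == "__main__":
    x = [1, 2, 3]
    print(convolve(x, [1, 0, -1]))         # asymmetric kernel: results differ
    print(cross_correlate(x, [1, 0, -1]))
    print(convolve(x, [1, 2, 1]))          # symmetric kernel: results agree
    print(cross_correlate(x, [1, 2, 1]))
```

Many deep learning frameworks implement their "convolution" layers without the kernel flip, which is harmless in practice because the kernel weights are learned.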

Research: My research deals with incorporating geometric inductive priors into deep neural networks. ... The version linked above is a pre-release of my PhD thesis. A printed edition from an academic publisher will soon be available as well. ... including group convolutional networks, harmonic networks, and tensor field networks - are unified ...

This is the development code for the doctoral thesis - DEVELOPMENT OF DEEP TRANSFER LEARNING VIA ARTIFICIAL NEURAL NETWORKS IN MODELING THE INDEX OF SPEECH TRANSMISSION -Convolutional Variational AutoEncoder (CVAE) and Deep Transfer Learning with a custom loss function. - GitHub - EribertoO/PhD-Thesis-Variational-Autoencoder-with-Transfer-Learning: This is the development code for the doctoral ...

Deep learning has been widely used on Euclidean data, and deep learning architectures have made breakthroughs as technology has developed. Common neural network architectures include the Deep Neural Network (DNN), Convolutional Neural Network (CNN), and Long Short-Term Memory (LSTM). These models have achieved results above the standard. But in various fields not all data can be ...